Hannes Heikinheimo

Sep 19, 2023

As long as general artificial intelligence doesn't exist (and it won't happen soon), any computer system needs a way of interacting with its users. Through the user interface, the user gives commands to the computer system and gets information back from it.

For example, if an application can calculate the sum of two numbers, it will need a way to get these numbers from the user. A typical calculator solves this problem by presenting a user with digits from 0 to 9 either in physical form or as touchable and clickable buttons.

The application also needs a way to show the results of this calculation to the user. A calculator most often has some kind of digital screen for showing this information.

A user interface can be judged by three main properties:

The main types of interfaces are:

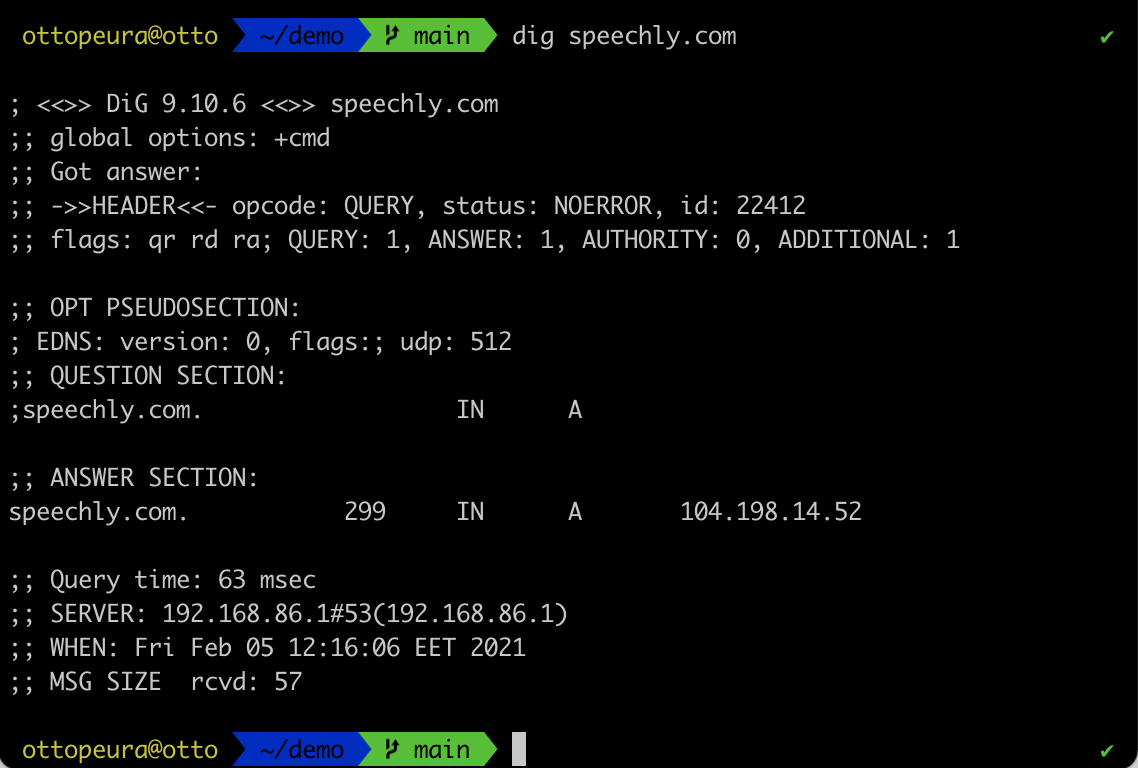

Command line interfaces (CLIs) process commands to a computer program in the form of lines of text. Examples of CLIs include the shells of UNIX systems, DOS, and the Terminal app on macOS.

Command line interfaces are typically unintuitive and have a significant learning curve, but once the user masters them they can be powerful and easy to automate through scripting. They can be used to create very efficient user interfaces, at the cost of being difficult to learn.
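To make the calculator example concrete, here is a minimal sketch of a command line interface for adding two numbers, written with Python's standard `argparse` module. The program name `add` and the function names are made up for illustration. It shows both sides of the trade-off: the user has to know the expected arguments in advance, but the call is trivial to script.

```python
import argparse
from typing import List, Optional


def build_parser() -> argparse.ArgumentParser:
    # The user must already know that the tool expects exactly two numbers.
    parser = argparse.ArgumentParser(prog="add", description="Add two numbers.")
    parser.add_argument("a", type=float, help="first number")
    parser.add_argument("b", type=float, help="second number")
    return parser


def add_cli(argv: Optional[List[str]] = None) -> float:
    """Parse the arguments, print the sum (the output channel) and return it."""
    args = build_parser().parse_args(argv)
    result = args.a + args.b
    print(result)
    return result


if __name__ == "__main__":
    # Explicit arguments for illustration; pass None to read the real command line.
    add_cli(["2", "3.5"])
```

Because input and output are plain text, a script can call the same tool in a loop, which is hard to do with a physical calculator.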

Graphical user interfaces (GUIs), sometimes referred to as WIMP (Windows, Icons, Menus and Pointers), are user interfaces that use a pointer (most often a mouse or a finger) to interact with elements on the screen.

Nearly all modern computer systems offer some kind of graphical user interface. While there's nothing intrinsically intuitive in using a mouse to click buttons, after a user has used some GUIs, learning new ones is often pretty easy. For example, if the user has used Word on Windows, using Google Docs on Linux should be fairly easy.

Scripting and automating graphical user interfaces is difficult. Using a mouse is not very ergonomic, and touch input is not very accurate. This is why graphical user interfaces are not always the best option for building efficient user interfaces.

Menu driven interfaces are a kind of graphical user interface that consists of a series of screens which the user navigates through by choosing options from a list.

Because menu driven UIs are simple, they are often used in walk-in terminals, such as ATMs. Each screen has one simple question or piece of functionality and a means to interact with it. They are intuitive and easy to use, but inefficient.
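The "series of screens" idea can be sketched as a small lookup table of screens, where each option leads to the next screen. The ATM screens, prompts and option labels below are hypothetical and only illustrate the structure.

```python
from typing import Dict, List

# Hypothetical ATM menu: every screen has one prompt and a list of
# (label, next_screen) options the user can choose from.
MENU: Dict[str, dict] = {
    "home": {
        "prompt": "What would you like to do?",
        "options": [("Withdraw cash", "amount"), ("Check balance", "balance")],
    },
    "amount": {
        "prompt": "How much?",
        "options": [("20", "done"), ("50", "done"), ("Back", "home")],
    },
    "balance": {
        "prompt": "Your balance is shown on screen.",
        "options": [("Back", "home")],
    },
    "done": {"prompt": "Please take your cash.", "options": []},
}


def render(screen: str) -> str:
    """Return the text of one screen: its prompt plus numbered options."""
    node = MENU[screen]
    lines = [node["prompt"]]
    lines += [f"{i}. {label}" for i, (label, _) in enumerate(node["options"], start=1)]
    return "\n".join(lines)


def navigate(start: str, choices: List[int]) -> List[str]:
    """Follow numbered choices and return every screen that was visited."""
    screen = start
    visited = [screen]
    for choice in choices:
        options = MENU[screen]["options"]
        if 1 <= choice <= len(options):
            screen = options[choice - 1][1]
            visited.append(screen)
        # An invalid choice is tolerated: the user simply stays on the same screen.
    return visited
```

Each screen shows everything the user can do next, which is where the intuitiveness comes from. The inefficiency shows up in `navigate`: even a simple withdrawal takes one choice per screen.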

Voice user interfaces are user interfaces that are used through speech. Typical examples of voice user interfaces include smart speakers and voice assistants.

Voice UIs employ speech recognition and natural language understanding technologies to transform user speech into text and meaning. Speechly is a tool for enhancing traditional touch user interfaces into multimodal voice user interfaces, but let's get back to that a bit later.

Voice user interfaces are highly intuitive as they use the most natural way for us to communicate: speech. For inputting information, they are significantly faster than typing. For getting information from the computer system back to the user, they are significantly slower than reading or seeing.

User interfaces can be divided into many different classes. Some other types of user interfaces include form-based user interfaces that use text input boxes, dropdown menus and buttons to simulate a paper form.

Text-based natural language user interfaces, such as chatbots, can be thought of as close relatives of voice user interfaces. They are almost like voice UIs, but employ text rather than voice. In contrast to voice user interfaces, they can be slow for inputting information but faster for outputting it.

Some call voice user interfaces and text-based natural language user interfaces Conversational User Interfaces (CUI), but it is important to note that voice user interfaces do not need to be conversational. For example, "Turn off lights" can be seen as a voice command that does not need any kind of conversation back from the computer system. The user does not want to converse, but rather to turn off the lights.

While smart speakers have brought voice user interfaces to many living rooms, voice UIs are not a particularly new innovation. The first voice user interfaces were IVR, Interactive Voice Response, systems that enabled users to interact with a phone system by using speech. Typically IVRs recognized only digits, but nonetheless they were early voice user interfaces.

The second type of voice UI is, of course, smart speakers and voice assistants that recognize all kinds of natural language. They are general purpose digital aides that mainly use voice as an output method back to the user.

The third type is voice command devices (VCD): devices such as home appliances that can be controlled with simple voice commands. Typically a VCD only understands a dozen or so commands.

The fourth type of voice UI is multimodal user interfaces that can employ all means of human-computer interaction: voice, touch, vision and tactile feedback. These user interfaces are not general-purpose, but built for a specific application and used in that context. Voice commands can be used in these kinds of applications for all or some user tasks.

| Type | Purpose | Output channel | Input channel | Use case |
|---|---|---|---|---|
| IVR | Specific | Voice | Voice | Narrow |
| Smart speakers | General | Voice | Voice | Wide |
| Voice command device (VCD) | Specific | Voice, display | Voice | Narrow |
| Multimodal voice UIs | Specific | All | All | Wide |

You can read more about the benefits of multimodal voice UIs in this article.

User interfaces can be either good or bad. Good user interfaces are the ones the user doesn't have to think about much. They work intuitively, respond to commands fast and give back results quickly.

Good UIs are intuitive, concise, simple, and aesthetically pleasing. They give enough feedback to the user, tolerate errors such as misclicks or typos, and use familiar elements so that they are easy to use even for new users.

The bad ones, well, are hard to use, slow and unintuitive. They are the ones where, even after using them a million times, the user still doesn't know how they work or why they work the way they do.

Different user interfaces have different properties, and hence the user interface should be designed for each use case of an application. User interfaces should not be thought of as binary selections but rather as modalities that are employed differently.

There's no such thing as the "best" user interface. User interfaces should employ all possible modalities and use different kinds of user interfaces for different user tasks to support different use cases.

Using the three properties of user interfaces laid out at the beginning of this post, let's consider how different user interfaces fare.

Multi-modality means that a user interface supports different interaction modalities simultaneously. For instance, a graphical user interface can be scripted through a command line user interface. Or maybe the user uses voice commands to input information but gets the results back visually on a screen.

| Type of user interface | Learning curve | Efficiency for input | Efficiency for output | Discoverability |
|---|---|---|---|---|
| CLI | high | high | low | low |
| GUI | medium | medium | high | high |
| Menu driven UI | low | medium | medium | high |
| Voice UI | low | high | low | low |

Because a menu driven user interface has a very low learning curve, it is a great option for the initial screens of an application. Very often menu driven user interfaces are indeed used for the initial user onboarding.

Voice user interfaces on the other hand are very fast at inputting information and require minimal focus, so they are great for repetitive tasks and information heavy data input.

Graphical user interfaces are great at displaying information, so they should be employed for that.
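The reasoning in the last three paragraphs can be sketched as a lookup over the comparison table. The ratings below are copied from the table. The numeric ordering of low, medium and high, and the assumption that a lower learning curve is better while higher is better for everything else, are our reading of it.

```python
from typing import Dict, List

# Ordinal scale for the ratings used in the table.
LEVELS = {"low": 0, "medium": 1, "high": 2}

# Ratings copied from the comparison table above.
RATINGS: Dict[str, Dict[str, str]] = {
    "CLI": {"learning_curve": "high", "input": "high", "output": "low", "discoverability": "low"},
    "GUI": {"learning_curve": "medium", "input": "medium", "output": "high", "discoverability": "high"},
    "Menu driven UI": {"learning_curve": "low", "input": "medium", "output": "medium", "discoverability": "high"},
    "Voice UI": {"learning_curve": "low", "input": "high", "output": "low", "discoverability": "low"},
}


def best_for(prop: str, lower_is_better: bool = False) -> List[str]:
    """Return every interface type that shares the best rating for one property."""
    scores = {ui: LEVELS[row[prop]] for ui, row in RATINGS.items()}
    target = min(scores.values()) if lower_is_better else max(scores.values())
    return [ui for ui, score in scores.items() if score == target]


print(best_for("output"))                                # ['GUI']
print(best_for("input"))                                 # ['CLI', 'Voice UI']
print(best_for("learning_curve", lower_is_better=True))  # ['Menu driven UI', 'Voice UI']
```

No single row wins every column, which is the argument for combining modalities: for example, voice for input and a graphical screen for output.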

The best user interfaces support multiple interaction modalities (voice, touch and vision) simultaneously for an intuitive and efficient user experience.

Search filtering is one user task where it's very intuitive to use voice commands such as "show me green t-shirts", but it would be horrible if the machine answered back by using voice. Rather, the results should of course be shown visually, just like the user asked.

Because smart speakers only employ the voice modality, they are not very efficient user interfaces and work well only for simple user tasks. A smart designer chooses the best user interface for each task. For instance, if the user task is to select a color from three options, a touch user interface or a menu-driven user interface works great. But if the user task is to add their daily meals to an application or input information to a CRM, there's no better option than voice.

The first applications of voice UIs were interactive voice response (IVR) systems that came into existence back in the 1980s. These were systems that understood simple commands through a telephone call and were used to improve efficiency in call centers. The last time I encountered one was at a customer support call center for home appliances, where a synthesized voice asked me to say the name of the manufacturer of the device I needed help with. Once it correctly recognized my utterance "Siemens", it directed me to the right person.

Current voice user interfaces can be a lot smarter and can understand complex sentences and even combinations of them. For example, Google Assistant is perfectly fine with something like "Turn off the living room light and turn on the kitchen light".

However, as these smart speakers always wait until the end of the user utterance and only then process the information and act accordingly, they will fail if the user hesitates with their speech or says something wrong. For instance, you can try something like "Turn off the kitchen light, turn on the living room TV, turn on the bedroom and terrace lights and turn off the radio in the kitchen."

On the other hand, Speechly processes user input from the beginning and returns actionable results in a streaming fashion. This enables arbitrarily long utterances with multiple intents and entities. You can try our home automation demo with as complex an utterance as you want: it won't fail, and it will give the user real-time visual feedback. This feedback is the key to a great user experience.
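To illustrate the difference between waiting for the end of an utterance and processing it as it arrives, here is a toy sketch of streaming interpretation. It is not Speechly's API or model: it is a keyword matcher with a made-up vocabulary (`ROOMS`, `DEVICES`) that takes an already transcribed utterance one word at a time and, after every word, yields everything it has understood so far.

```python
from typing import Dict, Iterable, Iterator, List

# Hypothetical vocabulary for a home automation example.
ROOMS = {"kitchen", "bedroom", "terrace"}
DEVICES = {"light", "lights", "tv", "radio"}


def stream_segments(words: Iterable[str]) -> Iterator[List[Dict]]:
    """After each word, yield a snapshot of all intents and entities found so far."""
    segments: List[Dict] = []
    previous = None
    for raw in words:
        word = raw.lower().strip(",.")
        if previous == "turn" and word in ("on", "off"):
            # "turn on" / "turn off" starts a new intent segment.
            segments.append({"intent": "turn_" + word, "rooms": [], "device": None})
        elif segments and word in ROOMS:
            segments[-1]["rooms"].append(word)
        elif segments and word in DEVICES:
            segments[-1]["device"] = word
        previous = word
        # Copy the segments so that earlier snapshots are not changed by later words.
        yield [{**seg, "rooms": list(seg["rooms"])} for seg in segments]


utterance = ("Turn off the kitchen light, turn on the bedroom and terrace lights "
             "and turn off the radio in the kitchen")
for snapshot in stream_segments(utterance.split()):
    pass  # a real UI would redraw the screen here, once per word
print(snapshot)  # the final snapshot contains three separate intents
```

Because a snapshot is available after every word, the interface can show partial results immediately instead of failing or succeeding only once the user has stopped speaking.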

While user interfaces that use voice are intuitive once you know what they are supposed to do, the typical problems with voice user interfaces have to do with exploration. Let's say you find yourself locked in a small room that has a mirror on one side and seemingly similar metal panels on every other wall. That might be a cause for panic, or it might be a voice-enabled elevator.

If the user doesn't really know what the system is supposed to do, as is the case with almost every new mobile application you install, the best way to learn it is by clicking through menus and dialogs (or by reading the documentation!). While you do it, you may find buttons that you were not expecting to find but that seem interesting or helpful. Then you try them out and hopefully learn how the system works.

A system that only has a voice user interface (for example, a smart speaker), on the other hand, doesn't support this kind of usage. You can only try saying things, and it might or might not do what you were expecting. You can try saying "can you reserve me a table in a seafood restaurant" to your smart speaker, but the answer is either along the lines of "I don't understand" or, in the best case, something like "You'll need to log in to do that. Please open application XYZ on your mobile phone and set up voice."

And even if that worked, it doesn't necessarily mean that the smart speaker can also get you an Uber or flight tickets. You'll have to try each of these individually and learn. If you are building a voice user interface for your application, you should really think about how you support feature discoverability.

The second issue with voice-only user interfaces is slowness. Let's say you want to know what happened in the NBA in the last round. Reading out loud the results for each of the 15 games will take some time. While voice is the most natural way for us humans to interact, it's nowhere near the fastest way of consuming information. On the other hand, voice is faster than typing when inputting information.

This is why we at Speechly don't believe the current smart speakers and voice assistants employ voice UIs the right way. Our approach is what we call multimodal Spoken Language Understanding. The cornerstone of that is the interplay of touch user interface and voice.

We believe voice user interfaces should support multi-modality. Multi-modality means that a user interface supports more than one user interaction modality, for example, vision, touch, and voice. Now getting those NBA results becomes a lot nicer: saying "show me the latest NBA results" gives you a screen that shows the results. They are easy to skim through, a sudden loud sound from a distance doesn't break your experience, and you don't have to wait until you hear the result for your favorite team.

The other important thing is real-time visual feedback. If the system only processes information at the end of the utterance, the user needs to get their utterance right or the query will fail, and the user learns this only after they've stopped speaking and are expecting an answer. Speechly returns results in real time, which enables natural corrections and encourages the user to go on with the voice experience.

While adding a voice user interface can improve the user experience and make the application more efficient, it's not a silver bullet that'll turn a bad application into a good one. Just like any user interface, it needs designing and thinking through. We offer best practices for creating efficient multi-modal voice user interfaces and our Developer Support team is ready to help you when designing your application.

The user interface is an important part of any application. It should be created to be as simple and efficient as possible. More often than not, this is not possible by leveraging only one type of user interface. Rather, the application should leverage the best parts of all types of user interfaces. We call this multi-modality.

Multi-modal voice user interfaces are applications that take in voice commands and display information back to the user on a screen. Multi-modal voice UIs don't need a wake word (although they can use one, of course!); they can instead employ a push-to-talk button for improved privacy and low latency.

Multi-modal voice user interfaces don't suffer from discoverability issues, because the screen directs the user and helps them understand what the application can do. An example of this can be seen in the video below.

The user sees a form asking for typical information for a flight booking use case. After seeing this, the user pretty much knows what the application can do and then they can use natural language to interact with the form. They can fill the form in any order and they can correct themselves naturally as they can see the result on their screen in real time.

Multi-modal real-time voice user interfaces can be leveraged in all apps, but most apps should not be made voice-only as is the case with the current smart speakers. Voice user interfaces can work great in professional applications and consumer applications alike.

Examples of professional applications that can benefit from voice UI include CRMs with a lot of repetitive data input, voice picking in warehouses and other professional applications where data quality is important.

You can see some examples of the use cases for voice user interfaces in our Use cases section.

We have successfully solved issues with voice user interfaces with many of our customers. If you are interested in building voice user interfaces, leave your information in the form below and we'll get back to you as soon as possible!

Speechly is a YC-backed company building tools for speech recognition and natural language understanding. Speechly offers flexible deployment options (cloud, on-premise, and on-device), super accurate custom models for any domain, and privacy and scalability for hundreds of thousands of hours of audio.
