Hannes Heikinheimo

Sep 19, 2023


The 2023 Xbox Transparency Report is (likely) around the corner. Our first post broke down how moderation works at Xbox; this one takes a deep dive into the data from the inaugural report, comparing reactive and proactive moderation.

Xbox’s Transparency Report covering the first half of 2022 recorded over seven million actions taken against players for inappropriate content, comments, or activity. Last week’s post focused on the report’s qualitative elements and its description of the moderation process. Today we turn to the numbers.

The key headline is that enforcement actions rose compared with previous periods while player reports (complaints) fell. Xbox presents this largely as the result of increased proactive moderation, and that is probably true in large part. However, digging into the data raised some questions, and the answers reveal interesting insights you will not find in the Transparency Report or in the media coverage.

Before we get into the data, some additional context will be helpful. Xbox highlights in the report that it has shifted more of its moderation activity to proactive methods to complement legacy reactive processes. The report comments:

“To reduce the risk of toxicity and prevent our players from being exposed to inappropriate content, we use proactive measures that identify and stop harmful content before it impacts players. For example, proactive moderation allows us to find and remove inauthentic accounts so we can improve the experiences of real players. For years at Xbox, we’ve been using a set of content moderation technologies to proactively help us address policy-violating text, images, and video shared by players on Xbox… If content that violates our policies is detected, it can be proactively blocked or removed.”

The text shows that Xbox has implemented proactive moderation to identify “inauthentic accounts” and to screen “text, images, and video.” Note that voice chat and audio are not mentioned. This is not surprising. Tooling for detecting policy violations in text and video is mature and could be considered a basic standard of care. It is good that Xbox is improving these capabilities, but the absence of any mention of voice chat or audio suggests that voice moderation still relies largely on reactive practices.

“Proactive blocking and filtering are only one part of the process in reducing toxicity on our service. Xbox offers robust reporting features, in addition to privacy and safety controls and the ability to mute and block other players; however, inappropriate content can make it through the systems and to a player.”

The privacy and safety controls offer “Child,” “Teen,” and “Adult” settings as well as some customization options. Muting and blocking provide additional control:

“If another player engages in abusive or inappropriate in-game or chat voice communications, you can mute that player. This prevents them from speaking to you in-game or in a chat session.

“Blocking another player prevents you from receiving that player’s messages, game invites, and party invites. It also prevents the player from seeing your online activity and removes them from your friends list, if they were on it.”

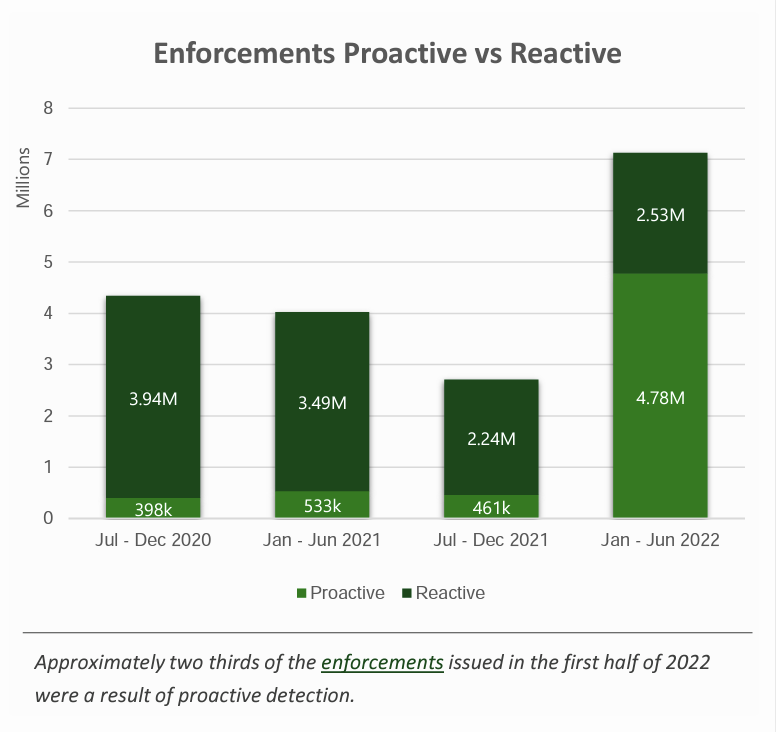

The most striking figure in Xbox’s Transparency Report is the roughly 10x rise in proactive moderation enforcement: 4.78 million actions in the first half of 2022, compared with 461,000 in the previous six-month period.

Interestingly, reactive moderation enforcement changed little and actually rose slightly, from 2.24 million actions in the second half of 2021 to 2.53 million in the first half of 2022. This suggests that the surge in proactive enforcement is not displacing existing reactive enforcement but is instead catching issues that previously went unnoticed.
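To make the comparison concrete, here is a minimal Python sketch that recomputes the headline ratios from the figures quoted above. It is plain arithmetic, not Xbox’s methodology; the period labels are our shorthand, and treating the report’s “over seven million actions” as proactive plus reactive enforcement is an assumption.

```python
# Enforcement counts as quoted in this post from the Xbox Transparency Report.
proactive = {"H2 2021": 461_000, "H1 2022": 4_780_000}
reactive = {"H2 2021": 2_240_000, "H1 2022": 2_530_000}

# How many times larger the newer proactive figure is than the older one.
proactive_ratio = proactive["H1 2022"] / proactive["H2 2021"]
# Relative change in reactive enforcement between the two periods.
reactive_change = reactive["H1 2022"] / reactive["H2 2021"] - 1
# Assumption: total actions for the period = proactive + reactive enforcement.
total_h1_2022 = proactive["H1 2022"] + reactive["H1 2022"]

print(f"Proactive growth: {proactive_ratio:.1f}x")  # Proactive growth: 10.4x
print(f"Reactive change: {reactive_change:+.0%}")  # Reactive change: +13%
print(f"Total H1 2022 actions: {total_h1_2022:,}")  # Total H1 2022 actions: 7,310,000
```

The 7.31 million total is consistent with the “over seven million actions” headline, and the reactive side grows by about 13% while the proactive side grows roughly tenfold.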

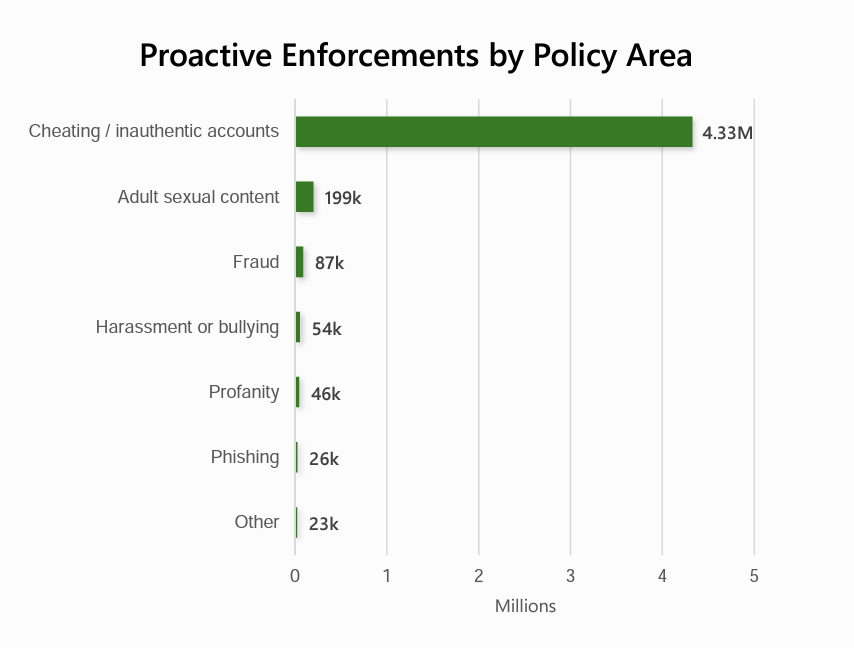

A closer look at the data shows that 91% of all proactive enforcement related to “Cheating / inauthentic accounts,” and only about 6% to toxic behavior.

Cheating is clearly a major issue for many game makers because it undermines the experience for honest players, and it is good that Xbox is making progress on it. However, the proactive moderation numbers should not lead you to conclude that big strides have been made in reducing toxicity. Proactively identifying 199,000 sexual content incidents, 54,000 harassment and bullying incidents, and 46,000 unwanted profanity incidents may indicate meaningful progress, but Xbox is likely seeing only a small percentage of its toxic behavior problems.
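As a cross-check, the three toxic-behavior categories named above can be summed and compared with total proactive enforcement. This sketch assumes those three categories make up most of the “toxic behavior” share; the post does not list every category, so treat the result as approximate.

```python
# Proactive enforcement figures for H1 2022, as quoted in this post.
proactive_total = 4_780_000
toxic_examples = {
    "sexual content": 199_000,
    "harassment or bullying": 54_000,
    "unwanted profanity": 46_000,
}
cheating_share = 0.91  # "Cheating / inauthentic accounts" share of proactive actions

# Assumption: these three categories approximate the whole toxic-behavior share.
toxic_total = sum(toxic_examples.values())
toxic_share = toxic_total / proactive_total
cheating_count = cheating_share * proactive_total

print(f"Toxic categories: {toxic_total:,} ({toxic_share:.1%})")  # Toxic categories: 299,000 (6.3%)
print(f"Cheating / inauthentic: ~{cheating_count:,.0f}")  # Cheating / inauthentic: ~4,349,800
```

The three categories total about 6.3% of proactive actions, in line with the “about 6%” figure, while cheating and inauthentic accounts account for roughly 4.35 million actions.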

Speechly’s consumer and industry research suggests that players report only 10% to 18% of voice chat toxic behavior incidents. That means 82% to 90% of incidents are completely invisible to game platforms that rely on player reports. Proactive monitoring is the only way to get a full view of the scale, scope, and nature of the problem, and it appears that Xbox has no proactive moderation in place for voice chat today. What if Xbox could make the same progress on voice chat toxicity as it appears to have achieved with cheating? That could have a profound impact on player safety and experience.
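The relationship between the reporting rate and the hidden share of incidents is simple arithmetic. The hypothetical `visibility` helper that follows assumes a single, uniform reporting rate across all incidents, which is a simplification of Speechly’s research range.

```python
def visibility(report_rate: float) -> tuple[float, float]:
    """Given the fraction of incidents players report, return
    (fraction never reported, ratio of actual incidents to reported ones)."""
    if not 0 < report_rate <= 1:
        raise ValueError("report_rate must be in (0, 1]")
    return 1 - report_rate, 1 / report_rate


# Low and high ends of the reporting-rate range cited above.
for rate in (0.10, 0.18):
    unseen, multiplier = visibility(rate)
    print(f"{rate:.0%} reported -> {unseen:.0%} unseen, ~{multiplier:.1f}x reported volume")
# 10% reported -> 90% unseen, ~10.0x reported volume
# 18% reported -> 82% unseen, ~5.6x reported volume
```

At a 10% to 18% reporting rate, actual incident volume would be roughly 5.6x to 10x what players report.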

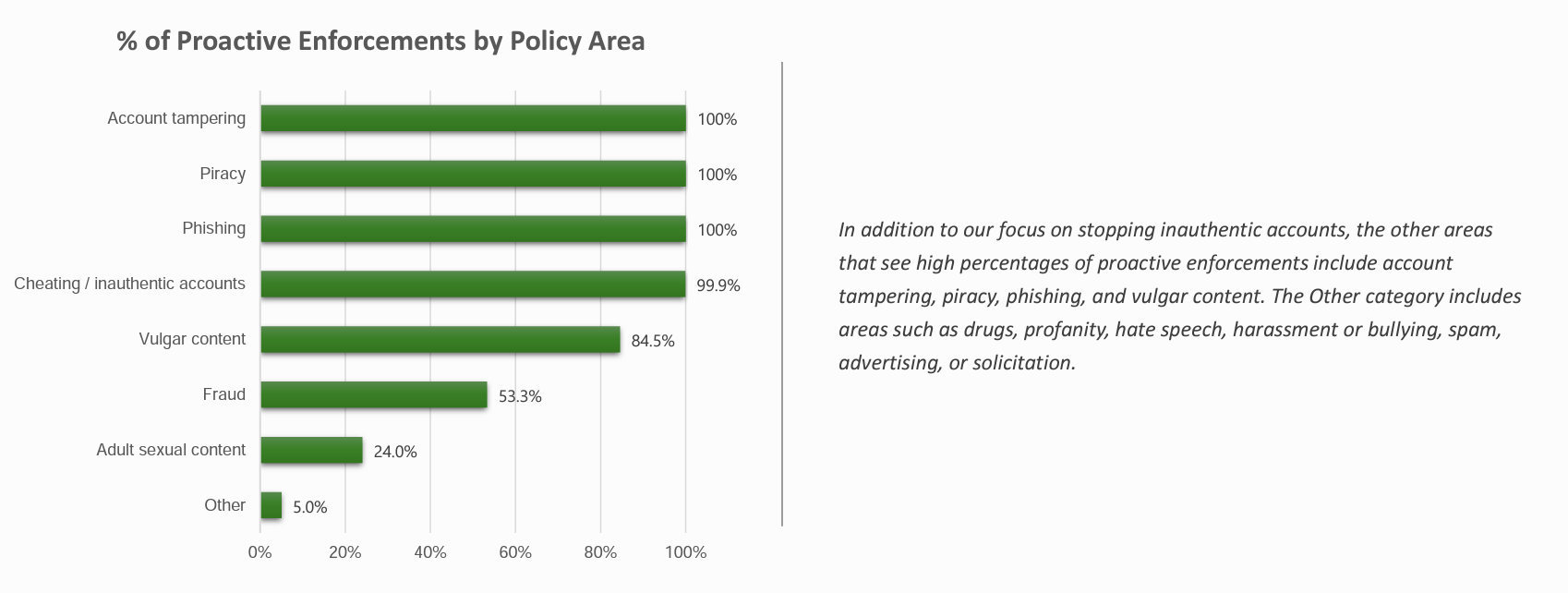

Another chart in the report offers some insight into the scale of the problem. According to Xbox, proactive moderation accounts for only 5% of incidents related to “drugs, profanity, hate speech, harassment or bullying, spam, advertising, or solicitation.” Proactive moderation of toxic behavior is therefore a subset of that 5%.

The implication is that, even in theory, Xbox may have visibility into only 10% to 22% of toxic behavior in voice chat and is still missing the vast majority of incidents. That said, the data in this chart almost certainly covers only text chat and content uploads, where the company has automated monitoring tools.

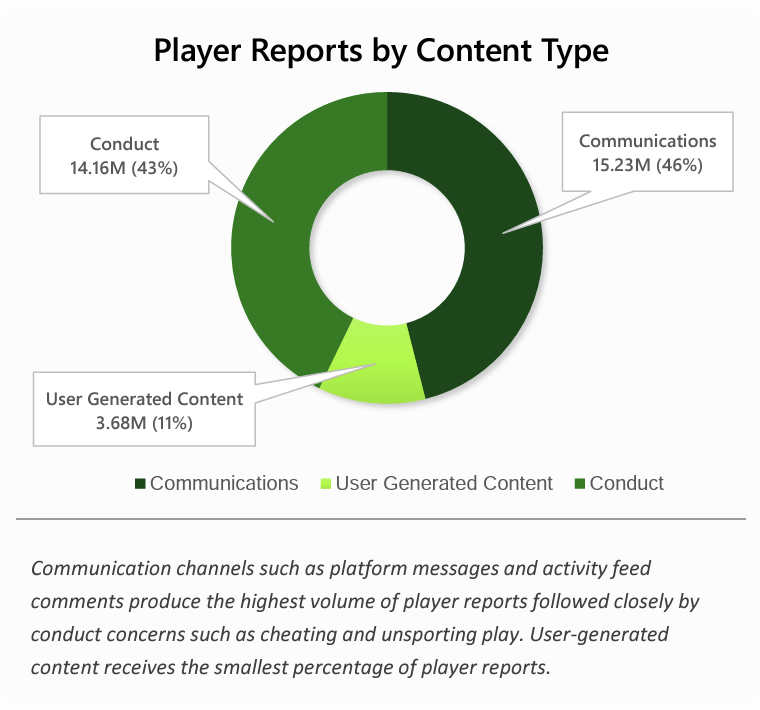

Xbox’s Transparency Report also shows that communications, such as voice and text chat, account for 46% of all player reports about another user’s activity. Cheating and other “Conduct”-related reports account for only 43%.

This offers insight into which issues players feel have the biggest impact on their experience: they report “Communications” incidents, which largely relate to toxic behavior, at a higher rate than cheating.

These problems may simply be easier for players to identify, but what gets reported is only the tip of the iceberg. Consider this: if every toxic behavior incident were reported, the Communications figure would be 5x to 10x higher and could represent 81% to 89% of all complaints.
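The 81% to 89% range can be reproduced with a short calculation. This sketch assumes that only Communications reports are under-reported and scaled up, that every other category’s count stays the same, and it uses rounded 5x and 10x multipliers; the `communications_share` function name is hypothetical.

```python
def communications_share(multiplier: float, comms_share: float = 0.46) -> float:
    """Share of all player reports that Communications would account for if only
    Communications reports were scaled up by `multiplier` (other categories unchanged)."""
    scaled = comms_share * multiplier
    return scaled / (scaled + (1 - comms_share))


for m in (5, 10):
    print(f"{m}x more Communications reports -> {communications_share(m):.1%} of all complaints")
# 5x more Communications reports -> 81.0% of all complaints
# 10x more Communications reports -> 89.5% of all complaints
```

With a multiplier of 1 the function simply returns the observed 46% share, which is a quick way to confirm the formula behaves as intended.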

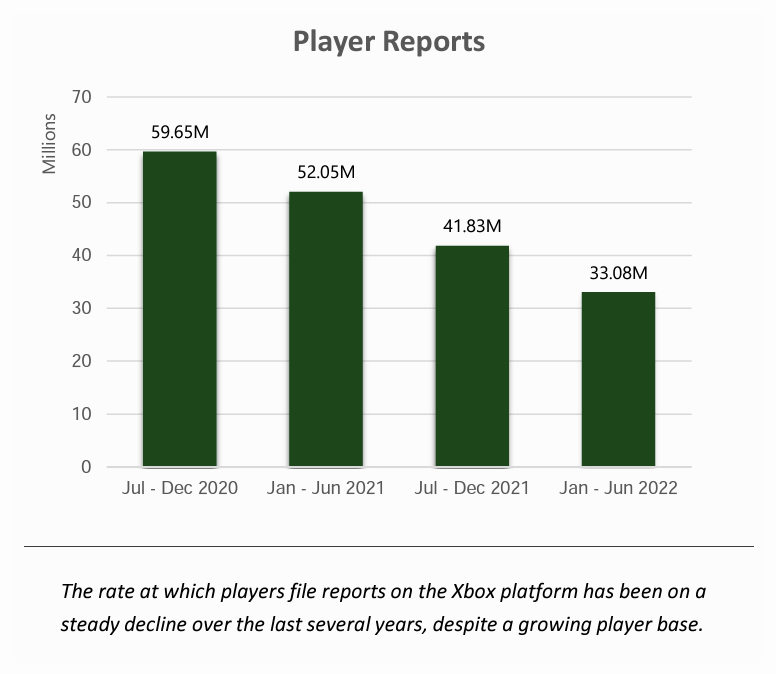

Player reports also appear to be declining. Xbox received only 33 million player reports in the first half of 2022, down from nearly 60 million in late 2020, 52 million in the first half of 2021, and 42 million in the second half of 2021. Is this a good thing?

Note that this decline began before Xbox’s reported rise in proactive enforcement, so it is hard to argue that proactive enforcement caused the drop in reports. More likely, Xbox’s proactive enforcement is finding issues that previously went unreported, which is a good outcome. It is unclear what drove the decline in reports, but it could mean that Xbox has less visibility into player problems than in previous years.
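The period-over-period change in player reports can be computed directly from the quoted totals. This sketch rounds “nearly 60 million” up to 60 million, so the first and overall declines are slightly overstated.

```python
# Player reports per period, in millions, as quoted in this post.
# Assumption: "nearly 60 million" for late 2020 is rounded to 60 here.
reports = [("late 2020", 60), ("H1 2021", 52), ("H2 2021", 42), ("H1 2022", 33)]

# Pair each period with the one after it and print the relative change.
for (prev_label, prev), (label, curr) in zip(reports, reports[1:]):
    print(f"{prev_label} -> {label}: {curr / prev - 1:+.0%}")

overall_change = reports[-1][1] / reports[0][1] - 1
print(f"Overall: {overall_change:+.0%}")
# late 2020 -> H1 2021: -13%
# H1 2021 -> H2 2021: -19%
# H2 2021 -> H1 2022: -21%
# Overall: -45%
```

The decline is already well under way during 2021, before the surge in proactive enforcement reported for the first half of 2022.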

Transparency reports are built on measuring problems and explaining the processes a game maker uses to address incidents. This is an important development for the industry. Games are now significant social experiences, and everyone from social advocates to regulators wants to learn more about the scale, scope, and nature of the problems that show up in them.

The first step is to measure the problem so you can take thoughtful steps to address existing issues and identify new ones as they arise. Xbox is taking the proactive step of providing measurement transparency, and we expect its efforts to mark the early stages of a new trend. The game industry will benefit further as more game makers follow suit.

Speechly can help you measure voice chat toxicity so you can develop a plan to mitigate its impact or represent it accurately in your transparency report. If you would like to learn more, contact us anytime.

Speechly is a YC-backed company building tools for speech recognition and natural language understanding. Speechly offers flexible deployment options (cloud, on-premise, and on-device), super-accurate custom models for any domain, and privacy and scalability for hundreds of thousands of hours of audio.
