Hannes Heikinheimo

Sep 19, 2023

1 min read

A recent NYU report exposes how extremist actors exploit online game communication features. In this blog we expand on NYU's data and recommendations for maintaining safety and security in online gaming communities.

A report by the NYU Stern Center for Business and Human Rights highlights the prevalence of misogyny, racism, and other extreme ideologies in video game voice chat. The study suggests that even though the individuals spreading hate speech are a minority, they have a significant impact on gamer culture and real-life experiences.

A key reason for the significant impact is the susceptibility of gamers to such viewpoints, particularly due to the presence of impressionable young people and the lack of proactive moderation in online games.

The report then analyzes what game makers are, and are not, doing about the problem. It reads somewhat like an indictment of the game makers' lack of action, which seems a bit unfair even though the core themes are on point. Still, it is a well-researched and comprehensive analysis of the problem, and many of the findings align with Speechly’s own industry research.

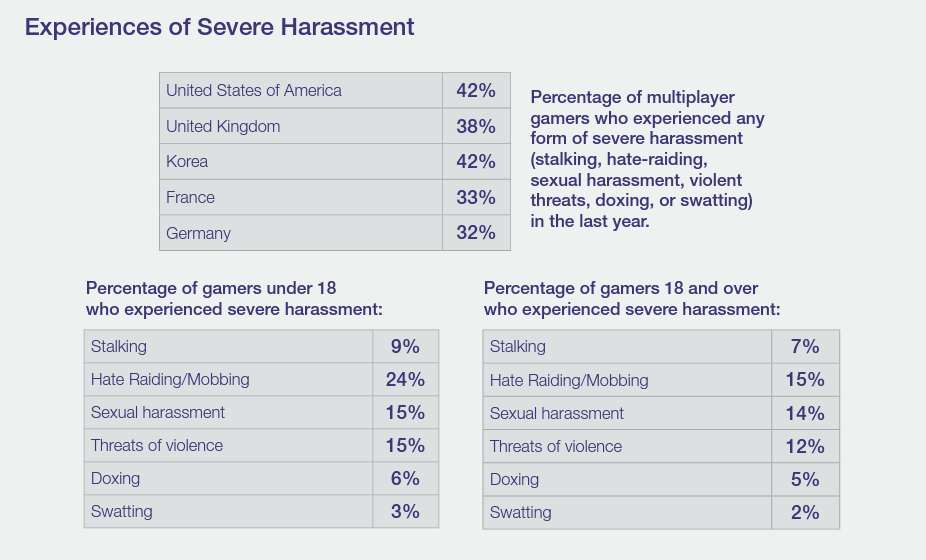

The survey was conducted in the U.S., UK, France, Germany, and South Korea. A key finding is that 51% of online players encountered extremist statements in multiplayer games over the past year. This tracks closely with Speechly’s consumer survey data, where we found that 53% of gamers had experienced a toxic incident in text chat and 49% in voice chat.

NYU says gaming companies claim to have taken steps to combat hateful content, including prohibiting extremist material and implementing detection systems to remove prohibited content. However, the report argues that the fast-paced nature of games and the sheer number of players make it challenging to monitor and regulate unlawful or inappropriate behavior effectively. It also suggests that many large gaming companies have been slow to take adequate steps to prevent the misuse of their platforms by bad actors, even though they have marketed and profited from features that are easily exploited without robust content moderation mechanisms.

Reactive moderation, which relies on user reports to identify and address problematic content, is an essential component of content moderation, according to the report. However, it is a flawed approach on its own because many users are either unable or unwilling to report troubling incidents.

NYU’s survey found that only 38% of respondents who experienced severe harassment while playing online games reported the incidents to game publishers or developers. This result is very close to the 36% figure that Speechly found in a larger U.S. survey.

This reliance on user reports is problematic and highlights the need for companies to improve their moderation capacity and investigate the reasons behind the low reporting rates. Speechly’s analysis also points to the fact that the underreporting situation is even worse than it might appear. The players that have reported incidents don’t report every incident. In reality, somewhere between 82% and 91% of incidents are never reported, and that means game makers have no idea they have even occurred.
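To make the arithmetic behind that kind of estimate concrete, here is a minimal sketch. It is an illustration only, not Speechly's actual methodology: the function name `unreported_share` is hypothetical, the per-incident reporting fractions (50% and 25%) are assumed values chosen for the example, and the model assumes incidents are spread evenly across affected players. Only the 36% reporter share comes from the survey figure quoted above.

```python
def unreported_share(reporter_share: float, reported_fraction: float) -> float:
    """Estimate the share of incidents that are never reported.

    reporter_share: fraction of affected players who report at all
                    (0.36 is the U.S. survey figure cited in the post).
    reported_fraction: fraction of their own incidents that those players
                       actually report (ASSUMED, not from the source).

    Simplifying assumption: every affected player experiences the same
    number of incidents, so reported incidents = reporter_share * reported_fraction.
    """
    for value in (reporter_share, reported_fraction):
        if not 0.0 <= value <= 1.0:
            raise ValueError("shares must be between 0 and 1")
    return 1.0 - reporter_share * reported_fraction


# Hypothetical scenarios: reporters flag half, or a quarter, of what they see.
for fraction in (0.5, 0.25):
    share = unreported_share(0.36, fraction)
    print(f"reporters flag {fraction:.0%} of incidents -> {share:.0%} unreported")
```

Under these assumed fractions the result falls in the same range as the figures above, but the source does not say how its range was derived, so treat this as a way to reason about the effect rather than a reconstruction of the analysis.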

With that said, shifting to proactive moderation is a challenging proposition for many game makers. NYU’s report is very good at laying out the goal and reasons for pursuing proactive moderation. However, this change has been slow to materialize due to the historical prevalence of solutions that are high-cost and inadequate. This is a key reason that game makers recruited Speechly to help solve these problems.

Speechly strongly recommends game makers implement proactive voice chat moderation. We also want to see them adopt solutions that actually perform well and don’t create a lot of additional work sorting through false positives and missed incidents. And it is in everyone’s interest to have solutions that are cost-efficient and don’t completely undermine the existing video game economic model.

To effectively combat extremist content, NYU recommends gaming companies invest in a combination of reactive and proactive moderation measures. Reactive moderation should involve the timely and reliable review of user-flagged content backed by clear explanations and appropriate enforcement actions. They say companies should leverage tools like AI-powered moderation platforms to scale up their reactive moderation efforts. However, certain issues can only be effectively managed by human reviewers, so companies must ensure they have enough in-house staff to promptly and reliably respond to user reports.

Proactive moderation, which involves detecting prohibited content before it is published or in real-time during a voice chat, is crucial in addressing the challenges of gaming platforms. On this point, the researchers suggest companies invest in automated detection systems and employ human investigators who use state-of-the-art tools, including large multilingual pre-trained datasets of extremist vocabulary.
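As a rough illustration of the automated detection idea, the sketch below scans transcribed voice chat segments against a vocabulary list and flags matches for human review. Everything in it is an assumption made for the example: the `FLAGGED_TERMS` placeholders, the `Flag` record, and the `scan_segment` function are hypothetical and do not represent NYU's recommendation in detail or Speechly's product. Production systems rely on speech recognition and language understanding models rather than simple keyword matching, which misses context and produces false positives.

```python
import re
from dataclasses import dataclass

# Placeholder vocabulary (hypothetical). Real lists are curated, multilingual,
# and maintained by trust and safety teams.
FLAGGED_TERMS = {"badword", "slur_example"}


@dataclass
class Flag:
    speaker: str  # who said it
    term: str     # which vocabulary entry matched
    text: str     # the full transcribed segment, kept for reviewer context


def scan_segment(speaker: str, text: str, terms=FLAGGED_TERMS) -> list[Flag]:
    """Return one Flag per token in the segment that appears in the term list."""
    tokens = re.findall(r"[a-z0-9_']+", text.lower())
    return [Flag(speaker, token, text) for token in tokens if token in terms]


# Usage: each flagged segment would be queued for a human reviewer.
for flag in scan_segment("player_1", "You are a BADWORD, honestly"):
    print(f"review needed: {flag.speaker} said '{flag.term}'")
```

Keeping the full segment text alongside the match reflects the report's point that automated detection should feed human investigators rather than replace them.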

Implementing proactive enforcement in gaming platforms is particularly challenging due to the instant and ephemeral nature of interactions. However, NYU researchers believe the industry should increase its investment in real-time or near-real-time proactive moderation technology. This would involve utilizing advanced tools and techniques to detect and address extremist content as it happens.

There seems to be a growing consensus that eradicating extremist content from gaming platforms is a complex undertaking. The industry may not be able to fully eradicate extremist encounters in its games, but it can take steps to better address the spread of extremist propaganda and promote a safer and more inclusive gaming environment.

This is exactly what we do here at Speechly. We build custom AI speech recognition and natural language understanding models to proactively identify toxic behavior in voice chat for game makers. We can conduct a quick analysis of your voice chat toxicity incidents, help you define a plan to mitigate the problem, and implement both reactive and proactive voice chat moderation solutions.

If you would like to learn more about our work with some of the highest-profile game makers, click the Contact Us button below to see a demo. You can also review a case study here.

Speechly is a YC-backed company building tools for speech recognition and natural language understanding. Speechly offers flexible deployment options (cloud, on-premise, and on-device), highly accurate custom models for any domain, and privacy and scalability for hundreds of thousands of hours of audio.
