Hannes Heikinheimo

Sep 19, 2023

1 min read

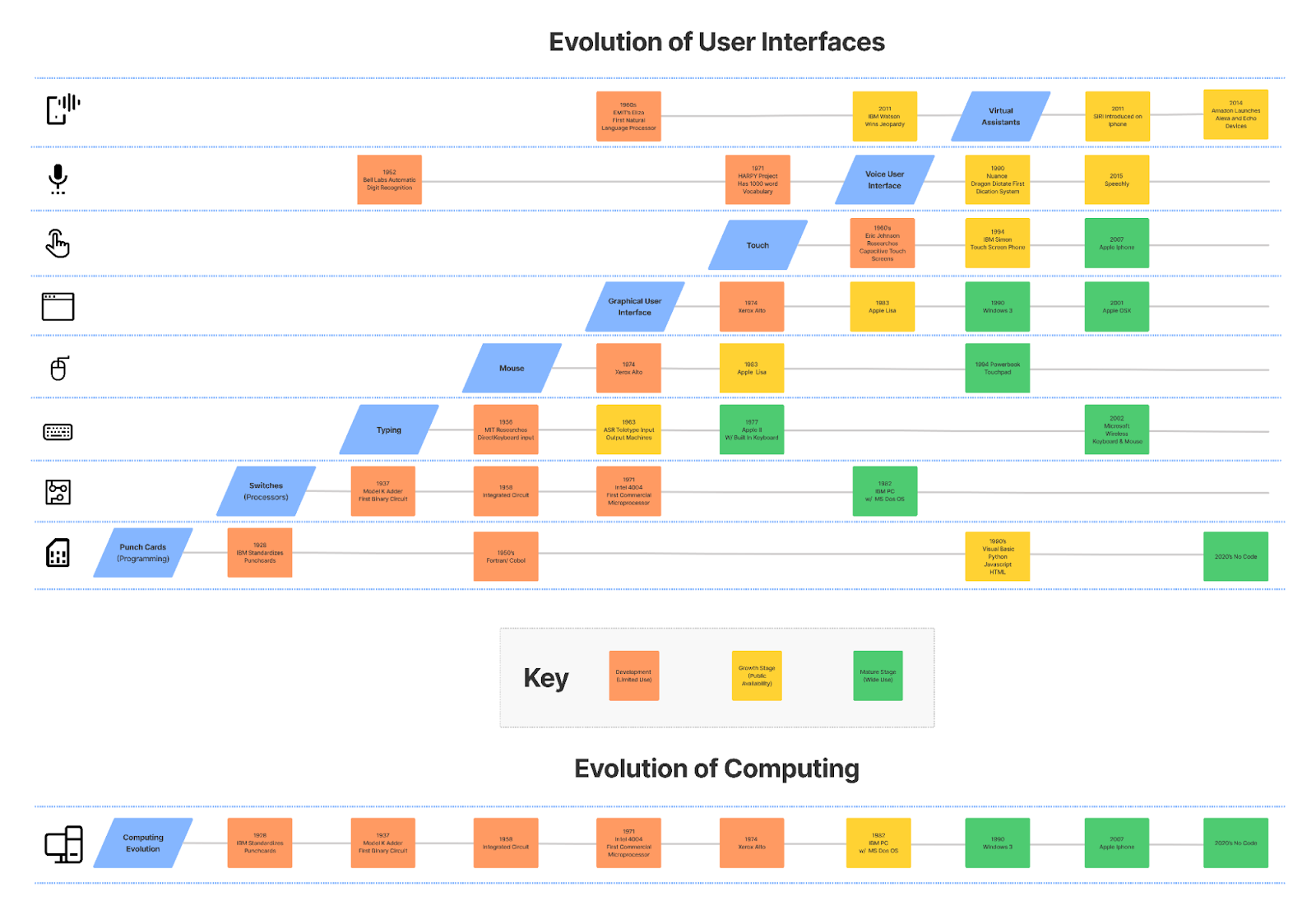

The user interface (UI) for computers has evolved rapidly since IBM punched cards became the dominant input/output medium back in the 1960s. Fast forward to the 2020s and we see a world dominated by two UIs: point-and-click alongside typing on the computer, and touch-and-swipe on the smartphone. While Voice Assistants have made an attempt to become the next UI in this evolution, this post will show why the next step is more likely to be Voice as a UI feature in computer and smartphone experiences.

User interfaces have evolved very logically over time. Punched cards gave way to switches, which in turn handed off to the first real open-ended input mechanism: typing. Typing was the dominant paradigm for nearly 20 years before the invention of the Graphical User Interface (GUI) and the mouse. However, it was a decade before that interface became common and nearly 20 years before the GUI clearly took over computing interfaces. In all, typing had nearly a 40-year run as the leading interface.

Touch interaction became the next big transition. It was first demonstrated in the 1960s, but it would be 40 years before it found a true home in the smartphone. That happened over a decade ago with the iPhone, introduced in 2007, and since then there have been two parallel dominant UIs: point-and-click alongside typing on the computer, and touch-and-swipe on the smartphone.

It seems obvious that voice technology will usher in the next important UI revolution. Thirty years ago Nuance debuted the Dragon dictation system. Within a decade, this type of technology wound up in enterprise call centers and even automobiles. However, it is still not common on computers in either the consumer or business sectors. This is despite the fact that speech is three to five times faster than typing and lets users efficiently get what they want regardless of which buttons or menus are available.

The real breakthrough came in 2011 with the introduction of Siri on the Apple iPhone. This new Voice Assistant category was seen as the next UI evolution. Siri and its competitors from Google and Samsung promised a human-like interaction when handling user requests. That proved to be a promise Big Tech could not keep. Complaints that the Voice Assistants didn’t work created a stigma around the solutions that took many years to shed. The Voice Assistant providers found themselves in the “Habitability Gap”, a term coined by Roger K. Moore. The “Habitability Gap” describes a scenario where a solution becomes more usable as it gets closer to humanlike conversational ability, up to a certain point where the assistant can no longer meet user requirements and interactions fall apart.

Responding to consumer criticism, the leading smartphone companies focused their attention on Voice-enabling command-and-control features such as initiating a phone call, setting a calendar appointment, and asking for directions. These narrowly defined use cases proved far easier to execute consistently and began rebuilding consumer confidence in the interface. This is notable: to succeed, the technology had to move backward along the flexibility continuum. It had improved a great deal, but was not ready to cross the gap.

Amazon’s introduction of Alexa in 2014 and Google’s alternative two years later muddied the waters further. Alexa was introduced to support an entirely new device without a screen. It needed to be more capable and conversational because there was no screen to fall back on when the user became stuck. Google followed with Google Assistant and chose to employ the same UI for both smart speakers and Android-based smartphones.

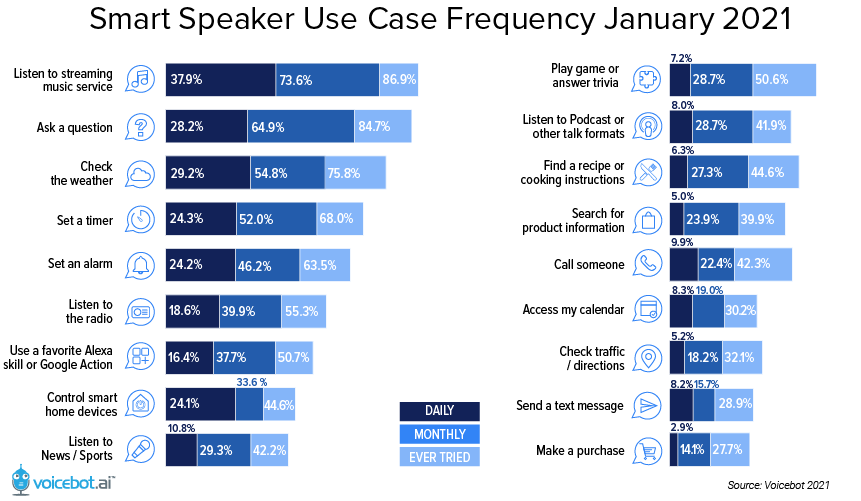

This furthered the rise of half-duplex systems, where only one participant in a conversation, the human or the machine, can act at a time while the other waits. Despite bold promises of humanlike conversational experiences and encouragement of third parties to build them, consumers consistently use the features that provide the most value. On smart speakers, these are simple request-and-response interactions such as requesting music from a streaming service or radio station, asking simple questions, and setting timers.

This trend was also evident on smartphones, where Alexa, Bixby, Google Assistant, and Siri jockeyed to be the favored Voice Assistant. The top Voice Assistant use cases to emerge on smartphones are asking questions, placing a phone call, sending a text, getting directions, and setting timers and alarms. Ambitious Voice Assistant features that often land in the “Habitability Gap” have seen far less use than popular request-and-response features that live in the space just before the habitability cliff.

Even though consumers were clearly showing the technology providers what they wanted, the Voice Assistant stack was built to support far greater flexibility than was required. The Voice Assistants were over-engineered for the tasks consumers wanted to perform. It is no wonder that many website, web app, and mobile developers looked at Voice Assistants as overly complex and inadvertently applied that sentiment to the viability of all Voice UI features.

The logical evolution from click and touch is to a much simpler Voice UI solution that helps users actually find the information they need and complete their intended tasks more efficiently, rather than making Voice into a new channel or platform. The ability to support natural language input and accurately identify user intent was the critical innovation. Multi-turn conversations turned out to be superfluous.

If you would like to learn more about the Speechly outlook on Voice UIs as a Feature vs a Channel, download our full white paper on “Voice UIs as a Feature vs Conversational Voice UIs”.


Cover photo by Eugene Zhyvchik on Unsplash

Speechly is a YC-backed company building tools for speech recognition and natural language understanding. Speechly offers flexible deployment options (cloud, on-premise, and on-device), highly accurate custom models for any domain, and privacy and scalability for hundreds of thousands of hours of audio.
