Hannes Heikinheimo

Sep 19, 2023

1 min read

Speechly was recently featured in The Wall Street Journal. Although it was an honor to receive recognition from such a respected source, what's even more noteworthy is that a prominent general business news publisher is shedding light on the issue of toxicity in video game voice chats. The fact that people outside the online gaming community are taking notice of this problem is significant.

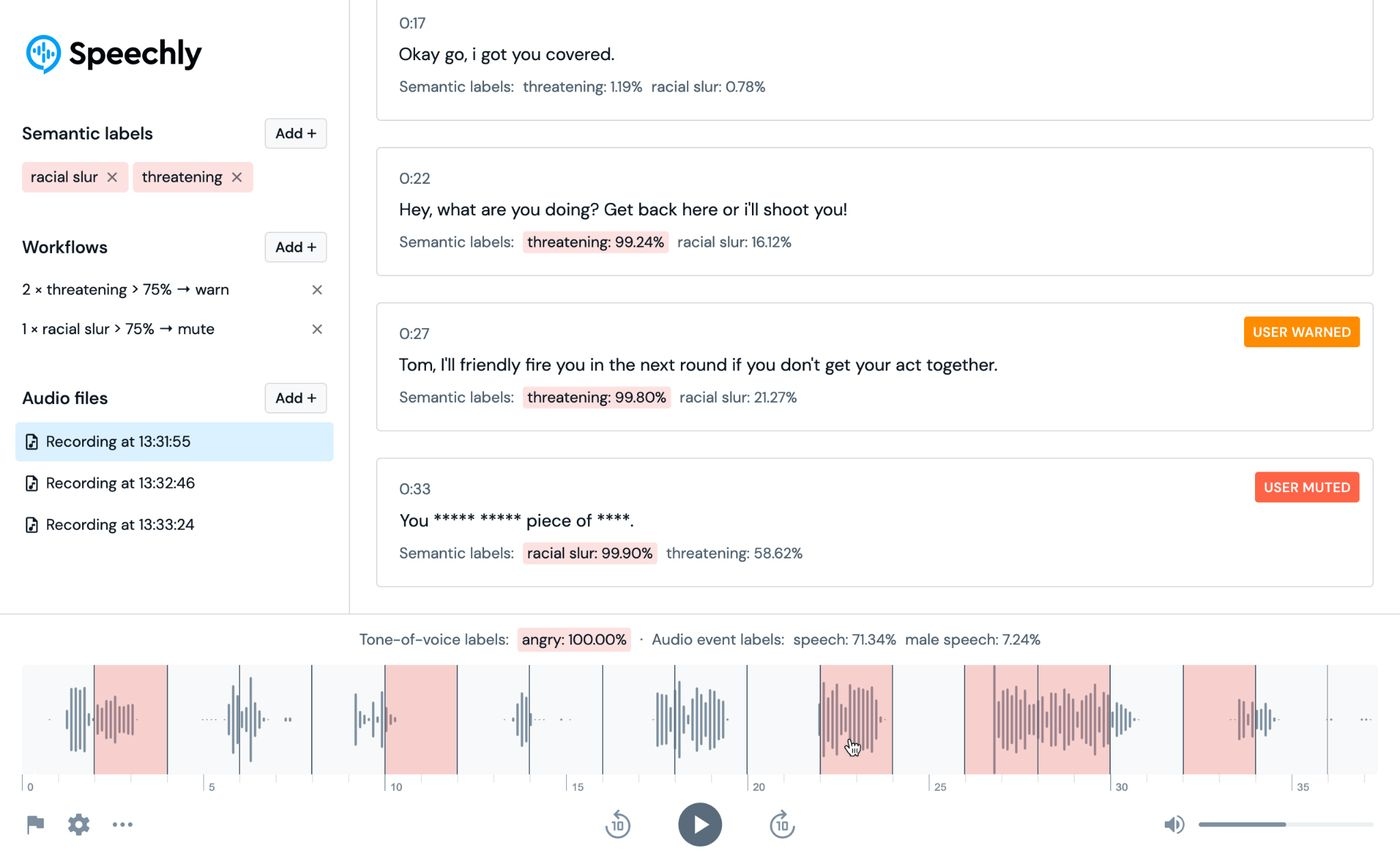

At the same time, it is clear that there is little understanding both inside and outside the gaming industry about the scale, scope, and nature of the toxicity problem in voice chat. The article says new AI technology can mute or ban players automatically, but this is an oversimplification of the problem, and the rules behind this type of solution are not trivial. A silver lining here is the suggested muting feature and interest in becoming more proactive about moderating voice chat toxicity.

Most people are surprised to learn that nearly 72% of in-game voice chat users have experienced a toxic incident. At the same time, nearly two-thirds of gamers who have experienced toxic behavior have never reported an incident, and even those who have do not report every event. According to The Wall Street Journal’s Sarah Needleman:

“Traditionally, game companies have relied on players to report problems in voice chat, but many don’t bother and each one requires investigating.”

Since game makers only know about toxic behavior when a player submits a complaint, few have any idea about how bad the problem is or how it manifests in their game community.

It’s not that surprising that people focus on real-time event flagging and the ability to intervene quickly. These features were not practical until just recently, and it is kind of magical to have the system do everything for you. However, we typically suggest that game makers first measure and analyze their voice chat for toxic behavior before implementing these solutions.

Our goal is to help game makers solve this problem cost-efficiently using the latest AI innovations, some of which are found in Speechly patents. However, we don’t assume the best course of action will be automatically muting or banning toxic players, or streamlining the investigation process for moderators. We look at the data, customize our AI models to the game and its specific toxicity problems, and apply them in the way that most effectively meets the game maker's objectives. This could be a fully automated voice chat moderation solution, a tool that flags incidents and provides additional context for human moderators, or a combination of the two.

If you are a game maker and would like to talk to us about your voice chat audio data, we’d like to hear from you. Also, if you are interested in learning more about our work in Voice Chat Moderation, check out the following content:

You can also learn about the gamer perspective on in-game voice chat toxicity in our 60-page report, which includes over 40 charts and diagrams.

Speechly is a YC-backed company building tools for speech recognition and natural language understanding. Speechly offers flexible deployment options (cloud, on-premise, and on-device), highly accurate custom models for any domain, and privacy and scalability for hundreds of thousands of hours of audio.
