Hannes Heikinheimo

Sep 19, 2023


Xbox will likely release its second Transparency Report this month. To prepare for the release, this blog post digs into the first Transparency Report, with particular attention to the Player Journey and the Moderation Process at Xbox.

We are expecting Xbox’s second Transparency Report sometime this month. It will cover the second half of 2022 and is expected to offer insight into two and a half years of data. Before it arrives, it is worth looking back at the first report, which began with the statement:

"At Xbox, we put the player at the center of everything we do – and this includes our practices around trust and safety. With more than 3 billion players around the world, vibrant online communities are growing and evolving every day, and it is our role to foster spaces that are safe, positive, inclusive, and inviting for all players, from the first-time gamer to the seasoned competitor."

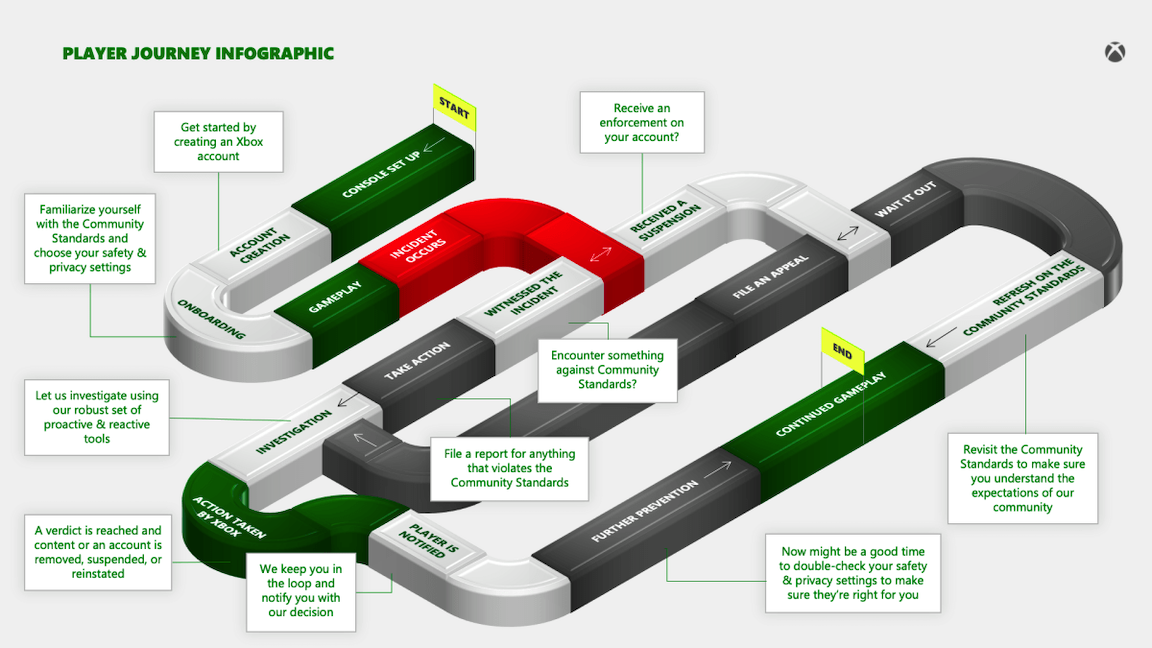

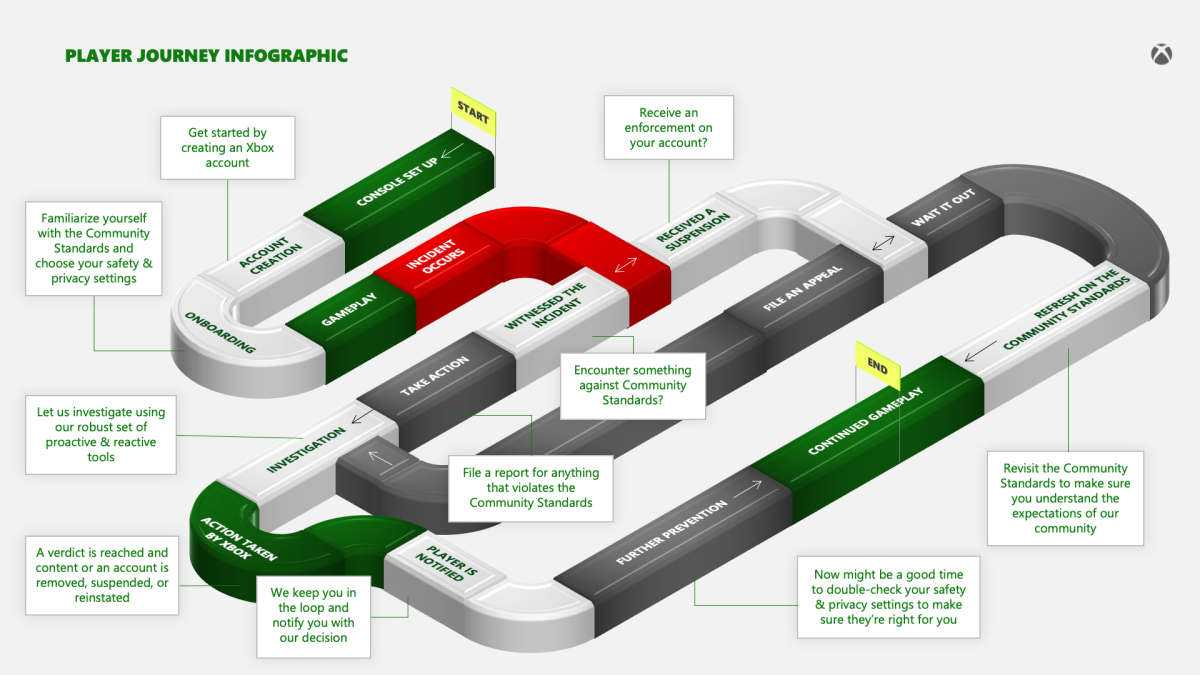

Xbox outlined the player journey at the end of the report, but we wanted to highlight it first because it is among the least understood aspects of trust and safety processes for game makers. In a perfect world, the Xbox experience would involve just four steps.

The first three steps are done only once, followed by a lifetime of enjoyable gameplay. Of course, that is not reality, and the next step is ominously labeled “incident occurs.” This is followed by branching logic that depends on whether you caused the incident or witnessed it.

If you caused the problem, the diagram indicates you will receive some form of enforcement, shown as a suspension. The diagram does not mention permanent suspensions; it focuses on temporary penalties, where the guilty can wait until they have paid their debt to society and then resume gameplay.

Alternatively, the accused can file an appeal, which leads to an investigation and a judgment issued by Xbox moderators. The player is notified of the judgment and, if cleared, can resume gameplay. The diagram does not show the path of a rejected appeal, but since most appeals are rejected, most will circle back to the “wait it out” step. Xbox reported that just 6.5% of all appeals in the January to June 2022 period led to an overturned enforcement decision.

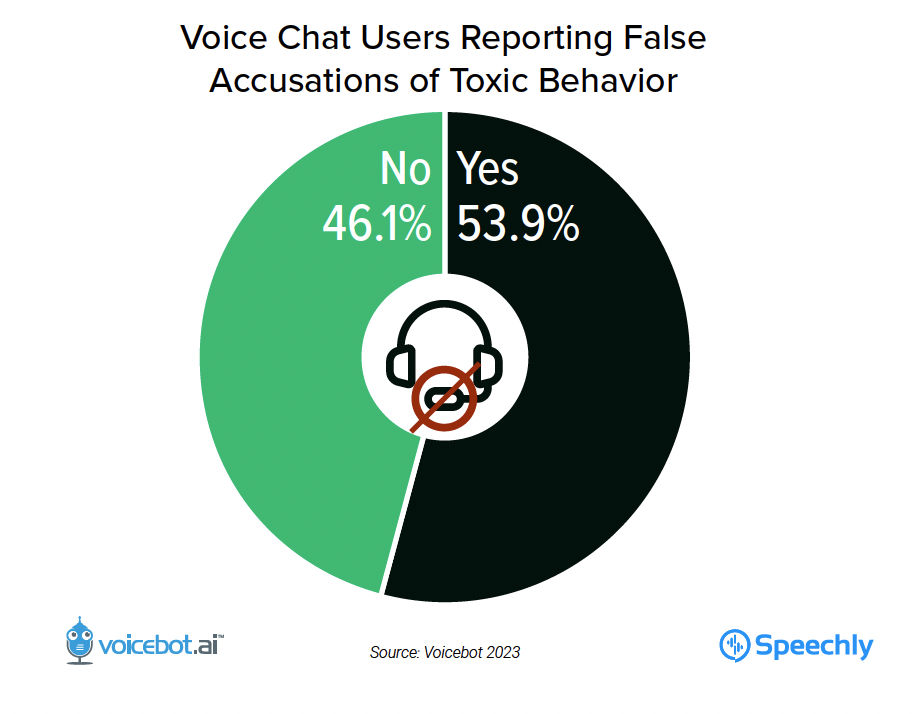

The appeals process is particularly important. A study of online gamer experiences by Speechly and Voicebot Research found that over half of players say they have been falsely reported.

Victims or witnesses of these “incidents” can “take action” by filing a report. That is followed by an investigation and judgment by a moderator.

Xbox points out in several places that it has implemented proactive content moderation tools to help “address policy-violating text, images, and video shared by players.” In Xbox’s terms, proactive means preventing “players from being exposed to inappropriate content.” In other words, a suspension or other enforcement action can result from either a player report or automated detection. According to the report:

"Most often, this comes in the form of removing the offending content from the service and issuing the associated account a temporary 3-day, 7-day, 14-day, or permanent suspension. The length of the suspension is primarily based on the offending content, with repeated violations resulting in lengthier suspensions, an account being permanently banned from the service, or a potential device ban…

At Xbox, violations of CSEAI (child sexual exploitation and abuse imagery), grooming of children for sexual purposes, or TVEC (terrorist and violent extremist content) will result in removal of the content and a permanent suspension to the account, even if it is a first offense. These types of cases, along with threats to life (self, others, public) and other imminent harms are immediately investigated and escalated to law enforcement, as necessary."

Xbox indicates in its support documents that individual games may impose other types of penalties based on their own policies and enforcement mechanisms that are “independent from Xbox.” These are called game-specific suspensions.

The Appeals for Case Review process can lead to an enforcement decision being “confirmed, modified, or overturned.” Xbox says that it investigates every report and that simply accumulating many reports against you will not lead to an automatic suspension.

The difficulty with these assertions is that they assume moderators have data to consult during the investigation beyond the player report itself. If the incident involved text chat, the moderator is likely to have evidence in the chat logs. There may also be gameplay telemetry that supports player complaints. And if the incident occurs in party chat and is reported, Xbox may have a recording available for the investigation. However, if it occurs during in-game voice chat, they do not.

This is a significant gap. Voice chat has become a major vector for toxic behavior in online games, yet moderators rarely have audio recordings to consult during an investigation. This can lead to missed, incorrect, and uneven enforcement.

Very few companies have ever shared their player journey with a moderation process included. It’s as if Xbox assumes every player will either face some sort of incident or be the perpetrator of one. That is a reasonable assumption, given that over half of all players experience toxic behavior in voice or text chat, and other channels of violation push these figures even higher.

In our next post, we will break down Xbox’s numbers and how they shed additional light on the nature of the incidents, the company’s process for identifying them, and overall trends.

Transparency reports are based on measuring the problems and explaining the process the game maker uses to address incidents. Speechly can help you measure the problem so you can develop a plan to mitigate the impact of toxic incidents or provide an accurate representation in your transparency report. If you would like to learn more, you can contact us anytime.

Speechly is a YC-backed company building tools for speech recognition and natural language understanding. Speechly offers flexible deployment options (cloud, on-premise, and on-device), super accurate custom models for any domain, and privacy and scalability for hundreds of thousands of hours of audio.
