Hannes Heikinheimo

Sep 19, 2023

1 min read

We interviewed 20+ experts in the Online Gaming & Metaverse space. Here are the key takeaways for Voice Moderation.

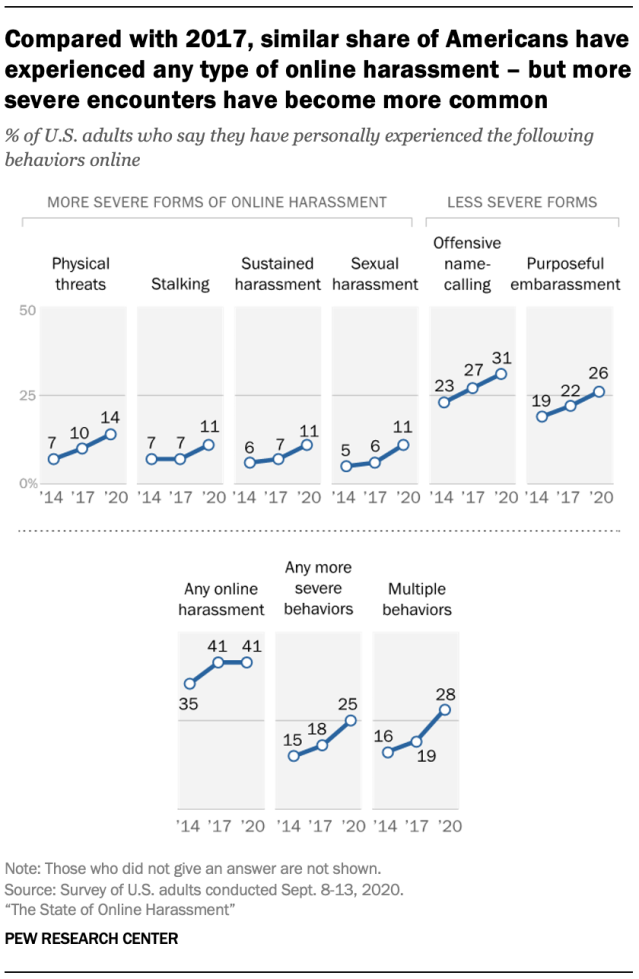

“Roughly four-in-ten Americans have experienced online harassment,” says the Pew Research Center. While the total share of consumers reporting a harassment experience didn’t change between 2017 and 2021, the research organization says, “more severe encounters have become more common.” This includes significant rises in physical threats, stalking, and sexual harassment.

While the overall harassment numbers from Pew were flat at 41% since 2017, users experiencing the more severe behaviors rose from 18% to 25%. Studies show that users actively avoid online games and stop using the games where harassment is common. Metaverse spaces share many characteristics of games and that will invariably include harassment. Oh, and the number one channel for harassment in games is … you guessed it … voice chat.

This presents a problem for metaverse developers. According to the Anti-Defamation League (ADL), “Abusers, who use voice chat in online games to target individuals, often evade detection because the tools and techniques to detect hate and harassment within a game’s voice chat lag behind those that moderate text communication.”

The challenges associated with moderating voice chat range from privacy to the options and timeliness of enforcing policies. These issues also exist for text chat moderation. However, voice chat has two other big issues in terms of accuracy and cost. Voice chat must first be accurately transcribed from speech to text before analysis can take place. Transcription technology has advanced considerably outside the gaming world over the past decade, but it is not optimized for monitoring online harassment in dynamic digital environments.

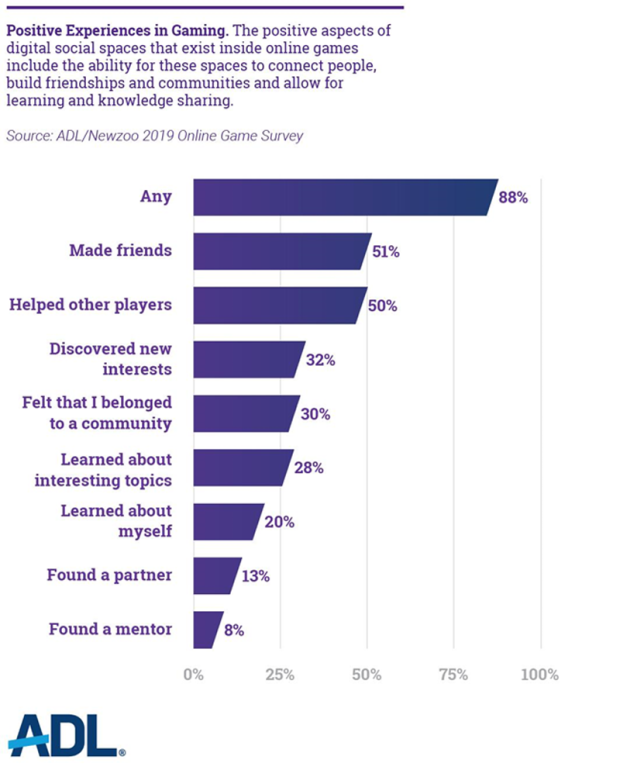

You might think that a simple solution would be just to turn off text and voice chat to avoid these problems. However, that runs counter to the evolution of gaming becoming more social.

Studies show that there are many positive psychological effects of adding social communication to games that enhance the overall experience. Games without chat are at a disadvantage to those with it. This is likely to have an even greater impact in metaverses where gaming is not the central activity. Social connectivity will be an essential feature. That means voice chat moderation must be a top priority for metaverse builders.

An Oxford Academic study from 2007 found that “voice chat leads to stronger bonds and deeper empathy than text chat.” As Subspace put it in 2021, “Voice deepens the immersive world, helps forge social bonds, and strengthens online play.”

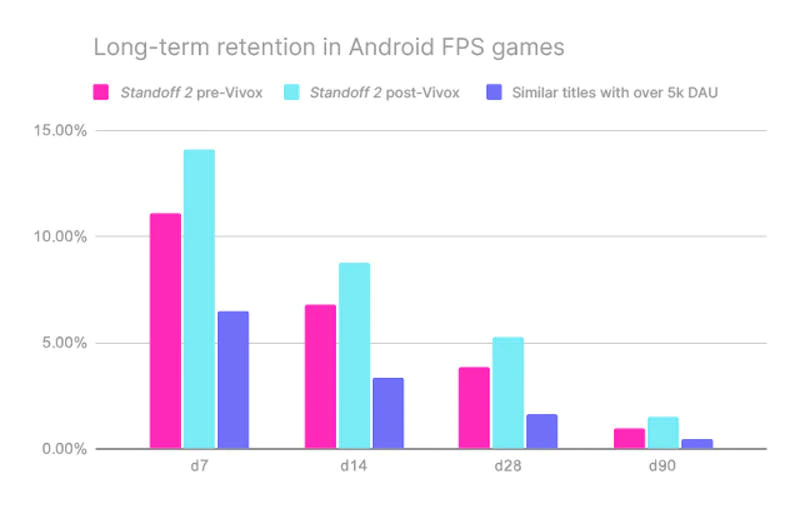

To put more concrete numbers to this, Axlebolt Studios found that 90-day player retention rose by 63% after implementing voice chat. The average revenue per user (ARPU) also rose by 12%. Axlebolt Studios’ Salah Sivushkove told Unity in an interview, “voice chat is unequivocally a must-have mechanic for multiplayer games.”

The ADL revealed concerning results for online gaming in 2021. A national survey found that “Five out of six adults (83%) ages 18-45 experienced harassment in online multiplayer games—representing over 80 million adult gamers.” That figure has risen for three straight years. In addition, “Three out of five young people (60%) ages 13-17 experienced harassment in online multiplayer games—representing nearly 14 million young gamers.”

The ADL’s 2020 report indicated that about half of the harassment took place in voice chat during gameplay, compared with 39% for text chat during gameplay. Out of match, voice chat again saw more bad behavior than text chat, at 28% to 22%.

That same year, 28% of online gamers avoided specific games due to their reputation for online harassment and 22% stopped playing. By 2021, those figures had risen to 30% and 27% respectively. This means toxic behavior carries a very real economic cost for game makers, beyond the human impact.

Riot Games recognized this problem could no longer be ignored for its Valorant title last year. The company announced that it was changing the terms of service and would begin recording and analyzing voice chats. A blog post announcing the change included the comments:

“Disruptive behavior on voice comms is a huge pain point for a lot of players. And we believe one of the ways to combat it is by providing quick and accurate ways to report abuse or harassment so we know when to take action. We also need clear evidence to verify violations of behavioral policies before we take action and to help us share with players on why a particular behavior may have resulted in a penalty.”

Unfortunately, we already know that this isn’t just an issue for online games. A woman in the UK claimed earlier this year that she had been verbally and sexually harassed in Meta’s Horizon Worlds metaverse. She wrote on Medium, “Within 60 seconds of joining — I was verbally and sexually harassed — 3–4 male avatars, with male voices…A horrible experience that happened so fast and before I could even think about putting the safety barrier in place. I froze.”

Motley Fool says, “there are approximately 400 million users of metaverse and metaverse-like worlds. By 2030, Citi predicts there could be up to 5 billion.” Some parts of the online gaming world already fall into that metaverse-like category. They offer insight into how the metaverse spaces will evolve and challenges they are sure to face.

Communication in the metaverse will not be typing and text-first. It will be speaking and voice-first. You are using your hands to navigate metaverse virtual worlds. The only practical way to communicate most of the time is by voice. Otherwise, you wind up with a start-and-stop experience where you are constantly waiting for someone to finish typing or stop moving so they can type and communicate. Even non-gaming metaverse experiences are subject to these interactive dynamics.

Moreover, the need for policing may not be limited to humans in the metaverse. Recall Microsoft’s infamous Tay chatbot deployed to Twitter. “Microsoft is battling to control the public relations damage done by its ‘millennial’ chatbot, which turned into a genocide-supporting Nazi less than 24 hours after it was let loose on the internet,” said an article in USA Today.

Many metaverses are deploying non-player characters (NPCs), and some of those have learning engines that could eventually generate conversations that stray from the normal script and head into “Microsoft Tay territory.” This would be a disaster because the metaverse wouldn’t even be able to assign the blame to a user. The question for metaverse builders is how to manage the risk.

The key issues of moderating voice chat boil down to accuracy and cost. Both relate to the conversion of Speech to Text that takes place before any analysis can be conducted. If the transcript is inaccurate, then you risk missing harassing behavior or mistakenly labeling non-harassing voice chat as toxic and alienating an innocent user. Neither of these outcomes is good for users or the metaverse community.
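To make the accuracy point concrete, here is a minimal sketch of a transcribe-then-analyze check. Everything in it is an assumption for illustration: the `BLOCKLIST` words, the `flag_transcript` helper, and the example transcripts are hypothetical, and real moderation relies on far more than keyword matching. It only shows how a single misheard word can flip the outcome in either direction.

```python
import re

# Hypothetical blocklist, for illustration only.
BLOCKLIST = {"loser"}


def flag_transcript(transcript, blocklist=BLOCKLIST):
    """Return the blocklisted words found in a transcript, in order of appearance."""
    words = re.findall(r"[a-z']+", transcript.lower())
    return [word for word in words if word in blocklist]


# Each pair is (what was said, what a hypothetical transcriber produced).
examples = [
    ("you are such a loser", "you are such a loser"),          # accurate transcript
    ("you are such a loser", "you are such a user"),           # harassment missed
    ("nice shot, closer to the flag", "nice shot, loser to the flag"),  # innocent user flagged
]

for spoken, transcribed in examples:
    should_flag = bool(flag_transcript(spoken))
    was_flagged = bool(flag_transcript(transcribed))
    print(f"{transcribed!r}: should_flag={should_flag}, was_flagged={was_flagged}")
```

In the second pair the analysis never sees the offending word, and in the third an innocent player is flagged, which are the two failure modes described above.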

Speechly recently participated in the famous Y Combinator tech startup accelerator. Over the course of that program, Speechly leaders had the opportunity to interview executives at 70 companies and ask them about their current challenges. Several specific themes around Speech to Text accuracy emerged as important.

We also learned that the Speech to Text solutions are too costly for many games and metaverses. While offerings from Google and Amazon are easy to access, their cost can be prohibitive at scale. The transcription is an added cost beyond what you encounter with text chat and can exceed $10,000 per day even for relatively small user bases of under 100k DAU.
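As a rough back-of-the-envelope sketch of how costs like this add up, the snippet below multiplies daily active users by voice minutes and a per-minute price. The inputs are assumptions chosen for illustration, not quoted vendor prices or Speechly data: 50,000 DAU, 20 minutes of voice chat per user per day, and a placeholder rate of $0.02 per transcribed minute. The `savings_factor` parameter is likewise hypothetical and simply applies the best case of the "up to 100x" figure mentioned in this post.

```python
def daily_transcription_cost(dau, voice_minutes_per_user, price_per_minute):
    """Estimate the daily cost of transcribing all voice chat, in dollars."""
    return dau * voice_minutes_per_user * price_per_minute


def cost_with_savings(cloud_cost, savings_factor):
    """Apply an assumed savings factor (e.g. 100 for a best-case '100x cheaper')."""
    return cloud_cost / savings_factor


# Assumed inputs, for illustration only.
cloud = daily_transcription_cost(dau=50_000, voice_minutes_per_user=20, price_per_minute=0.02)
best_case = cost_with_savings(cloud, savings_factor=100)

print(f"Cloud estimate:     ${cloud:,.0f} per day")
print(f"Best-case estimate: ${best_case:,.0f} per day")
```

With these assumed inputs, one million transcribed minutes per day comes to about $20,000 per day, which shows how a user base well under 100k DAU can pass the $10,000 mark.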

It is a difficult situation for game and metaverse builders. They need to have voice chat as a feature. It is essential that voice chat is moderated. The cost of traditional Speech to Text transcription solutions is too high to be economically viable. Something has to give.

At Speechly, we have worked alongside select partners to address the challenges around Accuracy and Cost with Speech to Text when using it for Voice Chat Moderation. With our API, developers have the ability to train Speech to Text models for their specific use case rather than using a generalized model. This helps create Voice Moderation that is accurate for the words that matter for the use case at hand.

Speechly can also be deployed On-Device or On-Premise. This results in a more cost-effective and private solution. Running Speech to Text On-Device or On-Premise can be up to 100x cheaper than the cloud. This option is also private by design, as the Voice Chat data is never required to leave the user's device or your company's data center.

If you are interested in learning more about how to use Speech to Text technology for Voice Chat Moderation, contact us to become a partner!

Metaverse adoption is taking off. With more than 400 million users today and the expectation of five-to-tenfold growth, every problem is going to require a scalable solution. Gaming has provided metaverse builders with an early warning about what to expect. Voice chat will be a key feature for metaverse spaces, but it comes with the risk of harassment and toxicity. Those risks have very real consequences in terms of user adoption, experience, and retention. The significant challenges in implementing voice chat moderation that go beyond text chat are a complicating factor. However, there are new technologies and techniques arising just in time for the Cambrian metaverse explosion.

Cover Photo by Jessica Lewis on Unsplash

Speechly is a YC-backed company building tools for speech recognition and natural language understanding. Speechly offers flexible deployment options (cloud, on-premise, and on-device), highly accurate custom models for any domain, and privacy and scalability for hundreds of thousands of hours of audio.
