Hannes Heikinheimo

Sep 19, 2023

1 min read

Voice chat is very popular with both users and the creators of games, social media platforms, and metaverse spaces. People get more out of the experience when they connect directly with other users, and their behavior shows this through longer sessions, more frequent usage, and higher retention. Those same metrics are attractive to platform and application creators because they drive higher average revenue per user (ARPU).

However, the introduction of voice chat comes with the risk of harassment for users. The Anti-Defamation League (ADL) reported in 2021 that as many as 27% of gamers who experienced online harassment in a game stopped playing it. Similar patterns are emerging for social and metaverse applications. So, there is a dilemma. Users like voice chat. Application providers like the user metrics that voice chat delivers. At the same time, harassment delivered through voice chat can undo many of those benefits and undermine a brand’s reputation.

The solution to this problem is not a secret. Moderation has been a hot topic in social media and gaming for nearly two decades. The difference is that most moderation techniques were developed for text or other asynchronous communication. Voice is real-time, can be costly to transcribe accurately, and presents different problems.

Social media platforms, along with some games and metaverse virtual worlds, also support sharing of user-generated audio and video content, which can be a vector for toxic behavior and harassment. Brand safety is also at stake if the space is ad-supported. All of these scenarios require accurate, fast, and cost-effective solutions that convert voice content into text so that analysis can identify harassment and other problematic content.

We interviewed more than 20 experts in the online gaming and metaverse space. Here are the key takeaways for voice moderation.

Voice chat is the most recognized risk vector where proactive moderation is needed. It is also the hardest to monitor and often the most personal for the victim. Users who exhibit toxic behavior or engage in harassment through voice chat are often targeting an individual or group. The victims are not collateral damage; they are the intended targets of abuse. That means the speed of response can make a real difference.

The ADL’s 2020 report on online harassment in games indicated that about half of the harassment took place in voice chat during gameplay, compared with 39% in text chat during gameplay. Outside of matches, voice chat again saw more harassment than text chat, at 28% versus 22%.

Because voice chat is real-time communication, it requires a solution that transcribes conversations quickly so you can identify toxic behavior and intervene before it escalates. The transcription also must be accurate. Otherwise, you may face too many false positives, where you reprimand innocent users, or too many false negatives, where you miss the toxic behavior altogether. Both of these outcomes negatively impact users.
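The trade-off between false positives and false negatives can be measured by comparing automated flags against human-reviewed labels. The sketch below is a minimal, hypothetical example (the `score` function and `ModerationOutcome` class are illustrative names, not part of any Speechly API) that counts the four outcomes and derives the two error rates discussed above.

```python
from dataclasses import dataclass


@dataclass
class ModerationOutcome:
    """Confusion-matrix counts for a batch of moderated utterances."""
    true_positives: int   # toxic utterances correctly flagged
    false_positives: int  # innocent utterances wrongly flagged
    false_negatives: int  # toxic utterances the system missed
    true_negatives: int   # innocent utterances correctly left alone

    def false_positive_rate(self) -> float:
        """Share of innocent utterances that were wrongly flagged."""
        negatives = self.false_positives + self.true_negatives
        return self.false_positives / negatives if negatives else 0.0

    def false_negative_rate(self) -> float:
        """Share of toxic utterances that were missed."""
        positives = self.false_negatives + self.true_positives
        return self.false_negatives / positives if positives else 0.0


def score(flags: list[bool], labels: list[bool]) -> ModerationOutcome:
    """Compare automated flags with human labels (True means toxic)."""
    if len(flags) != len(labels):
        raise ValueError("flags and labels must have the same length")
    pairs = list(zip(flags, labels))
    return ModerationOutcome(
        true_positives=sum(1 for flag, label in pairs if flag and label),
        false_positives=sum(1 for flag, label in pairs if flag and not label),
        false_negatives=sum(1 for flag, label in pairs if not flag and label),
        true_negatives=sum(1 for flag, label in pairs if not flag and not label),
    )


if __name__ == "__main__":
    # Hypothetical sample: five utterances, flags from an automated system,
    # labels from a human reviewer.
    outcome = score([True, True, False, False, True], [True, False, True, False, True])
    print(outcome.false_positive_rate(), outcome.false_negative_rate())
```

In this made-up sample, one of two innocent utterances is wrongly flagged and one of three toxic utterances is missed. Transcription errors feed directly into both numbers, which is why accuracy matters as much as speed.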

Finally, there is the issue of cost. Transcribing all voice chat through a cloud service quickly becomes very expensive. As a result, many application and platform providers avoid full coverage with voice chat monitoring and recording. Instead, most moderate voice chat only on an exception basis, which makes it harder to identify perpetrators and protect users from abuse. There are new, more economical solutions to this problem, but awareness of them is limited. These factors make voice chat both a significant risk vector for abuse and more challenging to moderate than text-based communication.
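A back-of-the-envelope calculation shows why full coverage gets expensive: transcription spend scales linearly with users, speaking time, coverage, and the per-hour price. The function below is a hypothetical sketch; every input, including the price, is a placeholder to be replaced with your own figures, not a quoted rate from any provider.

```python
def monthly_transcription_cost(
    daily_active_users: int,
    voice_minutes_per_user_per_day: float,
    price_per_audio_hour: float,
    coverage: float = 1.0,
    days_per_month: int = 30,
) -> float:
    """Estimate monthly speech-to-text spend for voice chat moderation.

    coverage is the fraction of voice chat audio that gets transcribed
    (1.0 = full coverage, 0.05 = exception-based sampling of 5%).
    All inputs are assumptions supplied by the caller.
    """
    if not 0.0 <= coverage <= 1.0:
        raise ValueError("coverage must be between 0 and 1")
    audio_hours = daily_active_users * voice_minutes_per_user_per_day / 60 * days_per_month
    return audio_hours * coverage * price_per_audio_hour


if __name__ == "__main__":
    # Placeholder inputs: 100,000 daily users, 60 speaking minutes each,
    # and a hypothetical price of 1.00 per audio hour.
    full = monthly_transcription_cost(100_000, 60, price_per_audio_hour=1.00)
    sampled = monthly_transcription_cost(100_000, 60, price_per_audio_hour=1.00, coverage=0.05)
    print(full, sampled)
```

With these placeholder inputs, full coverage means 3,000,000 audio hours per month. Cutting coverage to 5% cuts the bill by the same factor, which is exactly the trade-off that pushes providers toward exception-based moderation unless the per-hour cost drops.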

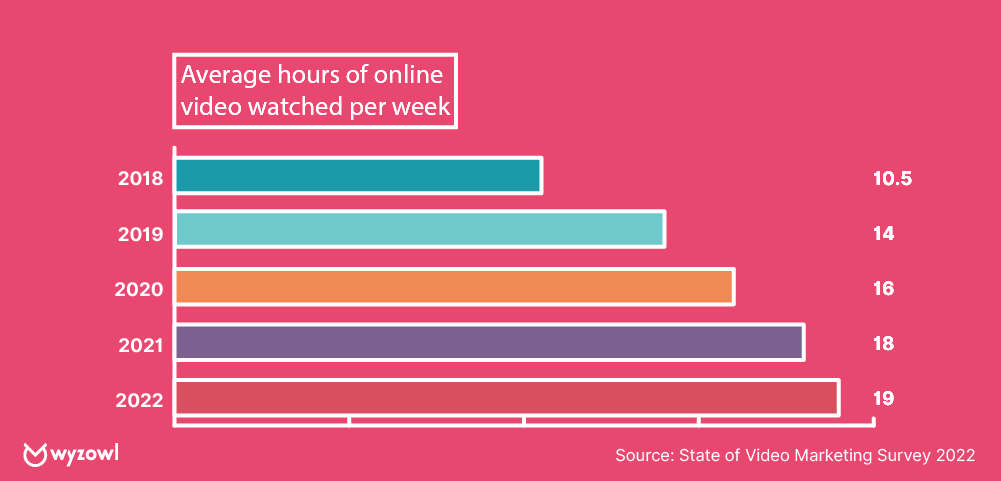

Allowing users to add video and audio content is a core use case of social media. These formats are actively encouraged because they are known to generate more user engagement and session time. Consumer video consumption has risen steadily in recent years. According to a report by Wyzowl, consumers watched an average of 10.5 hours of video per week in 2018. That figure rose to nearly 20 hours by 2022.

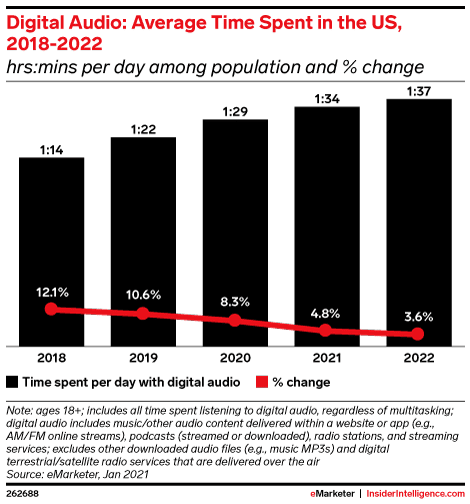

eMarketer data show an upward trend in online audio consumption as well. Digital audio listening grew from 1 hour and 14 minutes per day in 2018 to 1 hour and 37 minutes in 2022.

Of course, this presents a challenge. When a user uploads a video or audio file, how do you know it does not contain objectionable speech? The obvious answer is to apply the same moderation steps you would use for voice chat: transcribe the audio, then run the transcript through your text-analysis moderation tool.

The question is how many application providers are actually doing this effectively. Many wait for user complaints about offending material before reactively assessing the content. However, you can already do this proactively by transcribing the audio and scanning it for objectionable material. This can spare many users from exposure to offensive material before the reactive, complaint-led model kicks in. Wouldn’t it be better to quarantine items and address these issues before they become problems?
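The transcribe-then-scan flow with quarantine can be sketched as a single screening step that runs before an upload becomes visible. This is a minimal illustration under stated assumptions: `screen_upload`, `Upload`, and `Status` are hypothetical names, and `fake_transcribe` and `fake_is_objectionable` are stand-ins for a real speech recognition service and a real text moderation model.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, Optional


class Status(Enum):
    PUBLISHED = "published"
    QUARANTINED = "quarantined"  # held for human review, hidden from other users


@dataclass
class Upload:
    upload_id: str
    audio: bytes
    transcript: str = ""
    status: Optional[Status] = None  # None until the upload has been screened


def screen_upload(
    upload: Upload,
    transcribe: Callable[[bytes], str],
    is_objectionable: Callable[[str], bool],
) -> Upload:
    """Transcribe an upload and decide its status before anyone can view it."""
    upload.transcript = transcribe(upload.audio)
    if is_objectionable(upload.transcript):
        upload.status = Status.QUARANTINED
    else:
        upload.status = Status.PUBLISHED
    return upload


# Hypothetical stand-ins: a real deployment would call a speech recognition
# service and a text moderation model here.
def fake_transcribe(audio: bytes) -> str:
    return audio.decode("utf-8")  # pretend the "audio" is already text


BLOCKLIST = {"badword"}  # placeholder term list


def fake_is_objectionable(transcript: str) -> bool:
    return any(word in BLOCKLIST for word in transcript.lower().split())


if __name__ == "__main__":
    clip = screen_upload(Upload("clip-1", b"hello everyone"), fake_transcribe, fake_is_objectionable)
    print(clip.status.value)  # published
```

Passing the transcriber and classifier in as functions keeps the quarantine decision independent of which speech recognition or moderation service sits behind it.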

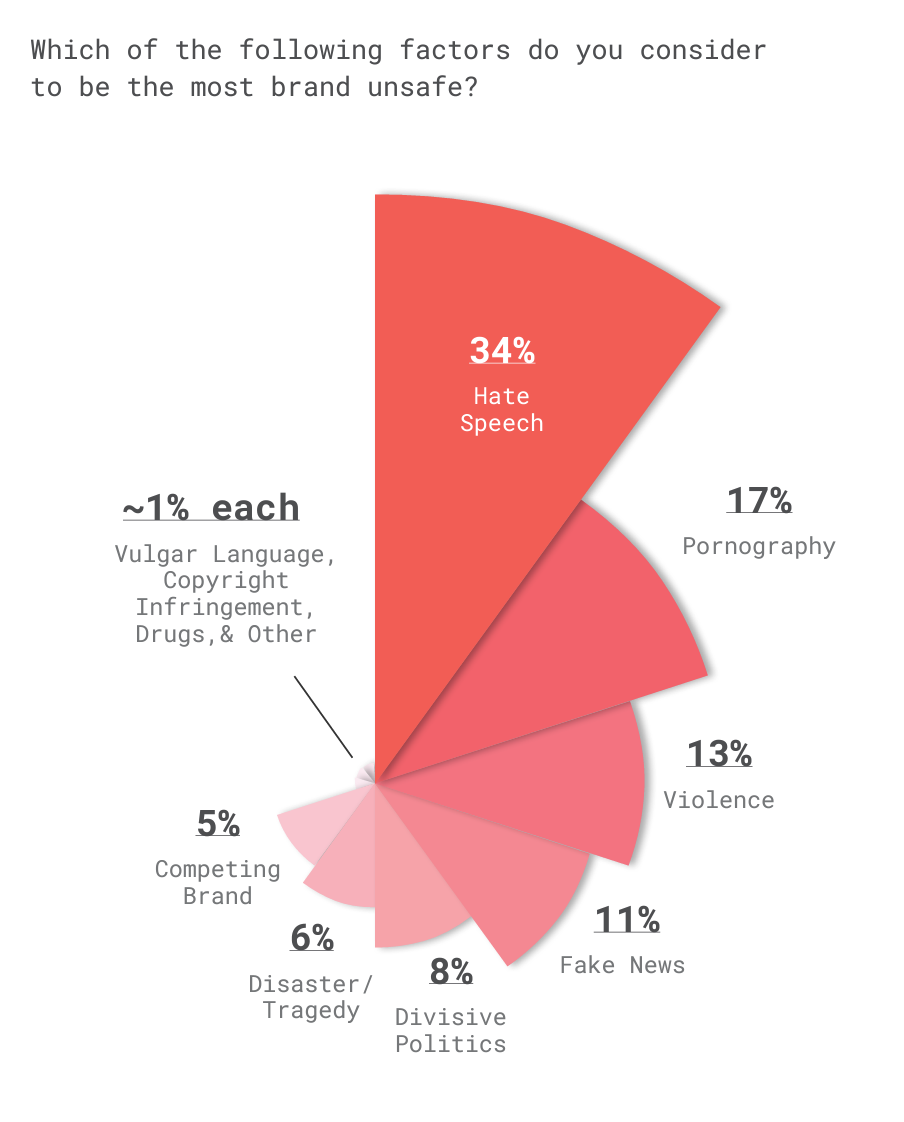

Brand safety is the least understood of the voice moderation vectors but may become one of the most important, particularly in ad-supported social media and metaverse environments. A report from GumGum and Digiday Media found that, “75 percent of brands reported at least one brand-unsafe exposure. And it’s not all about reputation and social media backlash: These incidents can do profound damage, leading to brand confusion and, in extreme cases, loss of revenue.” The top issue cited by advertisers was hate speech.

From terrorist messages and the war in Ukraine to COVID misinformation, politically charged topics, and ad placement next to hate speech, brand safety is a rising concern among advertisers. More than two-thirds of companies say they have faced a known brand safety issue, and 70% of marketers are taking the matter seriously. In 2017, JPMorgan Chase famously cut the number of websites carrying its ads from over 400,000 to just 5,000 to reduce brand safety risk.

To identify hate speech or references to controversial topics in user-generated content, you need cost-effective automated transcription that takes context into account. Context is very important because keywords alone don’t indicate whether the content is being presented in a problematic way. Moderation techniques applied to an accurate and timely transcript of the audio content can offer brand safety assurance while also protecting users from objectionable material.
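A deliberately naive keyword filter makes the context problem concrete. In the hypothetical sketch below (`keyword_flag` and the one-word `BLOCKLIST` are illustrative only), a gameplay callout, a news report, and a genuine threat all match the same keyword and receive the same verdict, even though only one of them is a problem.

```python
import re

# Hypothetical one-word blocklist, chosen to show the limitation.
BLOCKLIST = {"attack"}


def keyword_flag(transcript: str) -> bool:
    """Flag a transcript if any word matches the blocklist, ignoring context."""
    words = re.findall(r"[a-z']+", transcript.lower())
    return any(word in BLOCKLIST for word in words)


transcripts = {
    "gameplay callout": "Attack the north tower now!",
    "news report": "The anchor reported on the attack and condemned it.",
    "threat": "I know where you live and I will attack you.",
}

if __name__ == "__main__":
    for kind, text in transcripts.items():
        print(f"{kind}: flagged={keyword_flag(text)}")
```

All three transcripts are flagged. Telling them apart requires a model that looks at the surrounding words and the setting, which is only possible when the transcript itself is accurate.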

Content moderation practices emerged from a reactive model: mitigation steps are taken only after someone flags content as objectionable. That flag sometimes comes from an internal moderation team, but very often it comes from a user in the form of a complaint. In both cases, many users are typically exposed to the offensive material before it is removed.
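A simple model makes the cost of the reactive approach concrete. Assume, purely for illustration, that each viewer independently reports the content with probability $p$ and that it is removed the moment the first report arrives. The number of viewers $N$ who see it, including the one who reports it, then follows a geometric distribution:

$$
\mathbb{E}[N] = \sum_{n=1}^{\infty} n\,(1-p)^{n-1}\,p = \frac{1}{p}
$$

If one viewer in 100 reports the content ($p = 0.01$), about 100 people are expected to see it before it is pulled, and any delay in reviewing the report only adds to that number.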

Proactive moderation of real-time and recorded audio content is uncommon, largely because the required technology was historically both inadequate and prohibitively expensive. That is changing. New speech recognition technologies are more accurate and faster, and they can be delivered more cost-effectively than in the past. This creates an opportunity to implement automated, proactive moderation. The result will be fewer negative incidents affecting users, their experience, and the brand’s reputation.

The difference between voice and text moderation is not widely understood. As you can see, the differences extend beyond live conversations. You may allow users to submit short- or long-form text that you can proactively scan for objectionable user-generated content. This process becomes more complicated when that content is audio or video. The impact can range from offending users to alienating advertisers. Poorly tuned moderation can also generate false positives that undermine your relationship with users. Neither of these scenarios is desirable.

Of course, we are not writing this just so people will know where voice moderation is needed. Speechly has developed technology specifically for voice moderation that meets the demands for high accuracy, speed, and low cost. For the first time, this will enable application and platform providers to proactively monitor all voice chat and recorded audio. This proactive stance can prevent user problems, reduce complaints, and build a stronger overall business. Reach out to our product team if you would like to learn more.

Photo by Pavan Trikutam on Unsplash

Speechly is a YC-backed company building tools for speech recognition and natural language understanding. Speechly offers flexible deployment options (cloud, on-premise, and on-device), highly accurate custom models for any domain, and privacy and scalability for hundreds of thousands of hours of audio.
