Hannes Heikinheimo

Sep 19, 2023

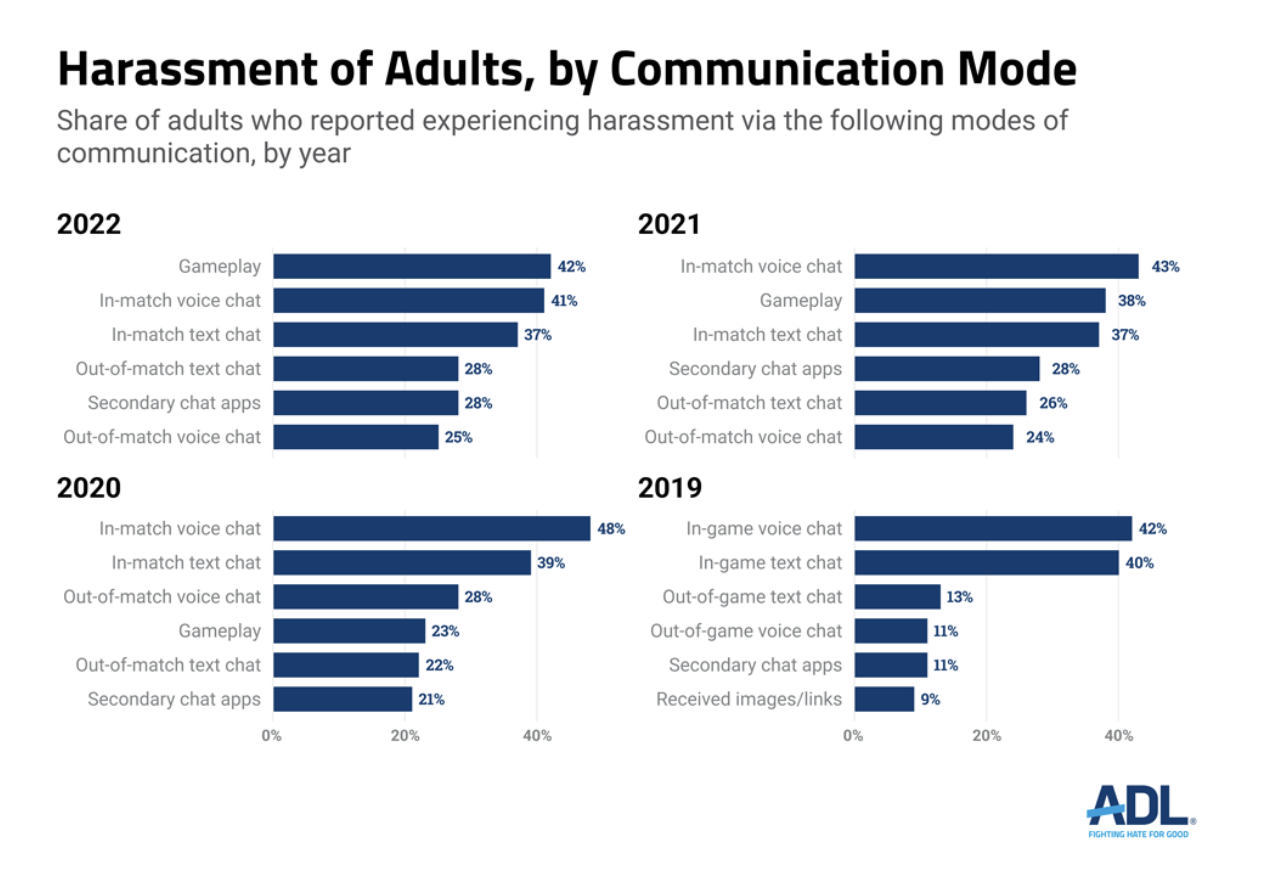

ADL’s annual report on harassment in multiplayer games shows a significant and worsening problem, and it highlights that voice chat is once again a leading channel for these incidents. In-match voice chat has consistently been cited by over 40% of U.S. adults as a source of toxic behavior. It was the top channel for harassment from 2019 through 2021 and fell just one point behind gameplay in the 2022 survey.

Reports of harassment in in-match voice chat also notably exceed those for in-match text chat in each of the survey years. This is consistent with other primary research data Speechly has reviewed. We suspect one reason voice chat harassment exceeds text chat harassment is that games are far more likely to have automated tools that mitigate the impact of the latter.

The ADL survey differentiates between in-match and out-of-match voice chat channels. It finds that out-of-match voice chat is not quite as toxic but is still an issue cited by one in four gamers.

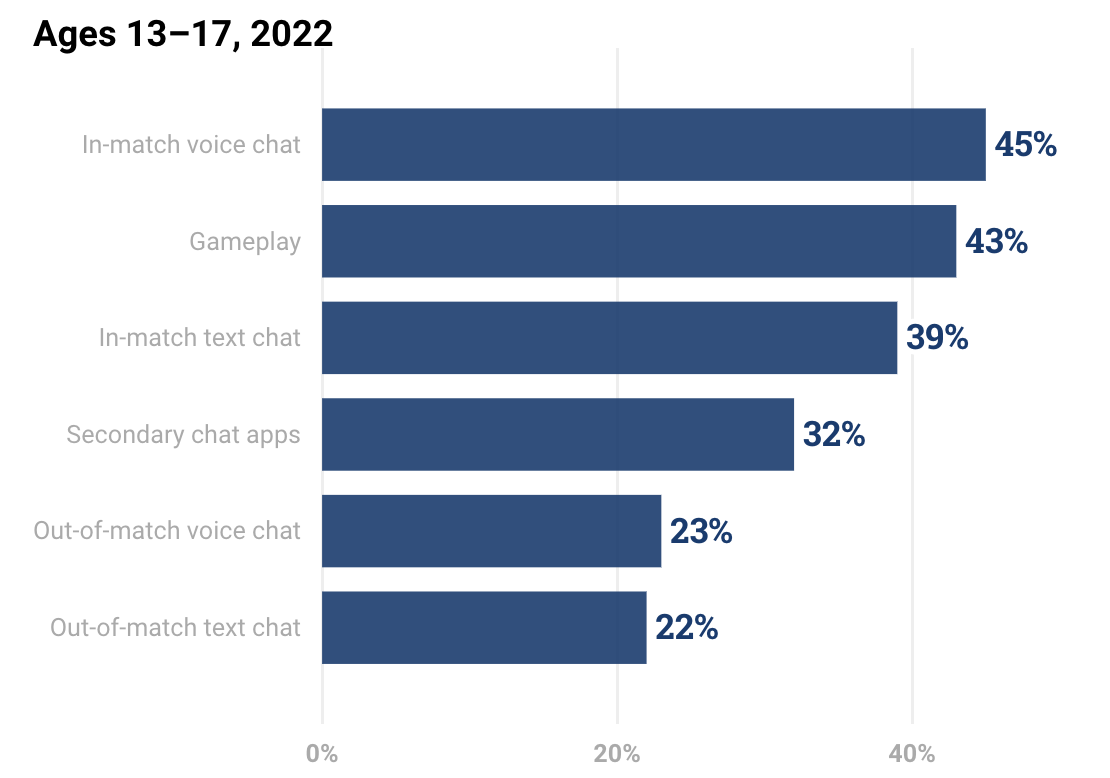

ADL data also show that voice chat is the leading channel for harassment of 13-17-year-old kids while playing games. Forty-five percent of kids responded that they had been harassed in voice chat, compared with 43% for gameplay and 39% for text chat. Gameplay did not change from the 2021 report, but voice chat rose six full percentage points. Text chat incidents rose more modestly.

In 2022, ADL also included data for 10-12-year-old children. Fifty-one percent said they had experienced harassment through in-match text chat, 46% through gameplay, and 41% through in-match voice chat. It may be that younger players are less comfortable making harassing statements via voice chat, or that fewer are allowed to use voice chat while playing games. Regardless, the presence of harassment in online games is substantial across channels and age groups.

Consumer surveys are beginning to paint a more accurate picture of how widespread harassment is in online games. Most games today rely on complaint-led reporting for voice chat harassment, which captures only the incidents that players choose to report. Speechly has found that about 70% of players who have experienced toxic behavior in a game’s voice chat have never reported an incident. Even the victims who have reported incidents have not reported every one.

Game makers have very low visibility into the breadth and depth of these issues. Reports such as ADL’s and another that will be published in February offer much-needed insight.

Many game makers do have visibility into harassment that takes place during gameplay. Even if they don’t regularly monitor these incidents, they typically can assess them by reviewing log data. Similarly, many game makers have at least basic filtering tools for text chat, and some are assessing context after complaints are submitted. This doesn’t necessarily surface the extent of the problem, but the data is available to go into a deeper analysis, and some game companies do this regularly.

Voice chat in gaming is generally a black hole for data. Few game makers today are recording voice chat audio, fewer still are transcribing the chats, and even fewer have the means to algorithmically analyze the data when it is available. This has led to a voice chat moderation gap that appears to be growing.

Game makers tell us that voice chat is important for improved gameplay experience, session frequency, and player retention. Industry data back up these contentions. However, voice chat also presents a significant risk factor. When harassment does occur, players reduce play, change their gameplay behavior, and some abandon specific games altogether.

Granted, there are technical and cost hurdles to recording voice chat audio, transcribing the conversations accurately, and analyzing them effectively. Speechly was recruited by several game makers to help overcome these obstacles. Reach out to our product team if you would like to learn more.

Also, if you would like to read a more detailed breakdown of these challenges, I recommend you check out some of our earlier blog posts on these very topics.

Speechly is a YC-backed company building tools for speech recognition and natural language understanding. Speechly offers flexible deployment options (cloud, on-premise, and on-device), highly accurate custom models for any domain, and privacy and scalability for hundreds of thousands of hours of audio.
