Hannes Heikinheimo

Sep 19, 2023

1 min read

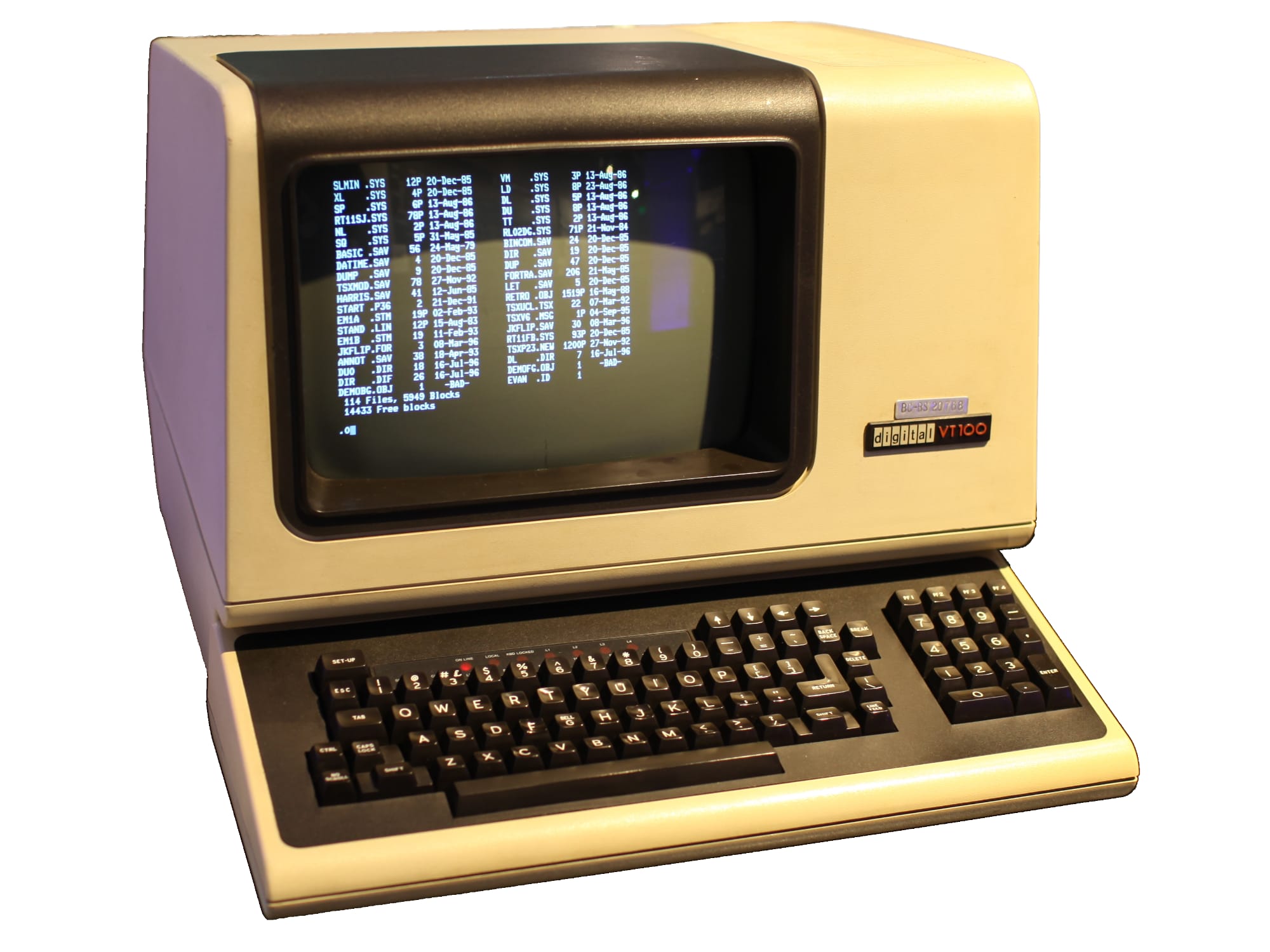

In the early days of digitalization, human-computer interaction meant a white (or green!) box blinking on a black screen. We have come a long way since then. The first revolution came with the mouse and graphical user interfaces (GUIs), the next with touchscreens and mobile phones. Now we are entering the era of voice user interfaces (VUIs). What does this mean from a UI designer's point of view?

In this blog post, I'll share some dos and don'ts to consider when designing a voice user interface. You can find more tips for voice design in our guide.

Conversational AI is a hot buzzword, but most people don't want to converse with their computers. Most if not all of us have better friends for that, even though we spend more time with our computers and mobile phones than with any of them.

Conversational AI most often refers to chatbots. Google defines a chatbot as "a computer program designed to simulate conversation with human users, especially over the Internet". These are voice user interfaces for sure, but most of the time you don't need the bi-directional "talking" that chatbots offer.

Most voice user interfaces are based on a simple question-answer pattern. The user asks something, the system waits, and then something happens. Often that something is the voice assistant answering back in speech.

This is a problem, because voice is an interrupting channel. If you've ever had a conversation, you know that both parties should not speak at the same time. The result is noise in which neither party understands the other.

However, we humans can easily speak and digest visual information simultaneously. Voice UI design should take advantage of this.

While the user is speaking, the user interface should continuously update to reflect what has been said so far. For example, if the user says "Show me red t-shirts from Hugo Boss in size large", the UI can update three times: first showing red t-shirts, then red t-shirts from Hugo Boss, and finally red Hugo Boss t-shirts in size large.

This encourages the user to continue with the voice experience and enables the user to correct themselves naturally.
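The progressive update described above can be sketched roughly as follows. This is a minimal illustration and not any particular SDK's API: the product list, the `STEPS` data, and the function names `apply_filters` and `progressive_views` are all hypothetical, and it assumes a speech engine that delivers recognized attributes in small batches while the user is still speaking.

```python
# Hypothetical product catalogue, used only for illustration.
PRODUCTS = [
    {"type": "t-shirt", "color": "red", "brand": "Hugo Boss", "size": "large"},
    {"type": "t-shirt", "color": "red", "brand": "Hugo Boss", "size": "medium"},
    {"type": "t-shirt", "color": "red", "brand": "Acme", "size": "large"},
    {"type": "t-shirt", "color": "blue", "brand": "Hugo Boss", "size": "large"},
    {"type": "jeans", "color": "blue", "brand": "Hugo Boss", "size": "large"},
]

# Assumed shape of the partial results for the utterance
# "Show me red t-shirts from Hugo Boss in size large".
STEPS = [
    {"color": "red", "type": "t-shirt"},
    {"brand": "Hugo Boss"},
    {"size": "large"},
]


def apply_filters(products, filters):
    """Return the products that match every filter collected so far."""
    return [
        p for p in products
        if all(p.get(key) == value for key, value in filters.items())
    ]


def progressive_views(products, steps):
    """Yield the list the UI would show after each partial result."""
    filters = {}
    for step in steps:
        filters.update(step)  # later words refine or correct earlier ones
        yield apply_filters(products, filters)


if __name__ == "__main__":
    for view in progressive_views(PRODUCTS, STEPS):
        print(len(view), "products shown")
```

Because each partial result simply overwrites the stored filter for its key, a user who corrects themselves mid-sentence ("...in size large, no, medium") would see the view change without restarting the command.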

The transcript is the most important feedback the user needs when using a voice UI. If the transcript is not shown, the user can't be sure whether they are being listened to at all. Most importantly, without the transcript the user has no way to understand why they were misunderstood, if that happens.

Always show the transcript within the user's field of vision. When the user activates the microphone, the transcript should be clearly visible in a natural place, either close to the microphone button or at the top of the screen.

One big issue with voice assistants is that the user cannot know what they can and can't achieve with the device without trying.

If you ask the assistant to set an alert, that works great. It also knows when Michael Jackson died. But does a Google smart speaker know how many steps you took yesterday according to Google Fit? It seems not. The user has no way of knowing this beforehand, because the smart speaker gives no visual hints about what is possible.

In a real-world application, one simple way to provide such visual hints is a form. Let's say you want to book a flight and are presented with a form that has input fields for from, to, date, class, and some other information.

Now it's clear to the user what they should say and the context in which they are commanding the system.
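As a rough sketch of the flight booking form above, the snippet below fills visible form fields from recognized values and reports which fields are still empty, so the UI can keep showing the user what is left to say. The field names come from the example; the `fill_form` and `missing_fields` helpers and the shape of the recognized values are assumptions made for illustration.

```python
# Visible form fields, taken from the flight booking example.
FLIGHT_FORM_FIELDS = ("from", "to", "date", "class")


def empty_form(fields=FLIGHT_FORM_FIELDS):
    """Create a form in which no field has been filled yet."""
    return {name: None for name in fields}


def fill_form(form, recognized):
    """Return a copy of the form updated with recognized values.

    Values for fields that are not on the form are ignored, because the
    visible fields define what the user can say in this context.
    """
    updated = dict(form)
    for name, value in recognized.items():
        if name in updated:
            updated[name] = value
    return updated


def missing_fields(form):
    """List the fields the UI should still highlight as empty."""
    return [name for name, value in form.items() if value is None]


if __name__ == "__main__":
    # Assumed result for an utterance like "from Helsinki to London".
    form = fill_form(empty_form(), {"from": "Helsinki", "to": "London"})
    print(form)
    print("Still needed:", missing_fields(form))
```

The form doubles as documentation: anything that has a visible field is something the user can say, and the remaining empty fields act as prompts.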

Speechly is a YC-backed company building tools for speech recognition and natural language understanding. Speechly offers flexible deployment options (cloud, on-premise, and on-device), highly accurate custom models for any domain, privacy, and scalability to hundreds of thousands of hours of audio.

