Hannes Heikinheimo

Sep 19, 2023

1 min read

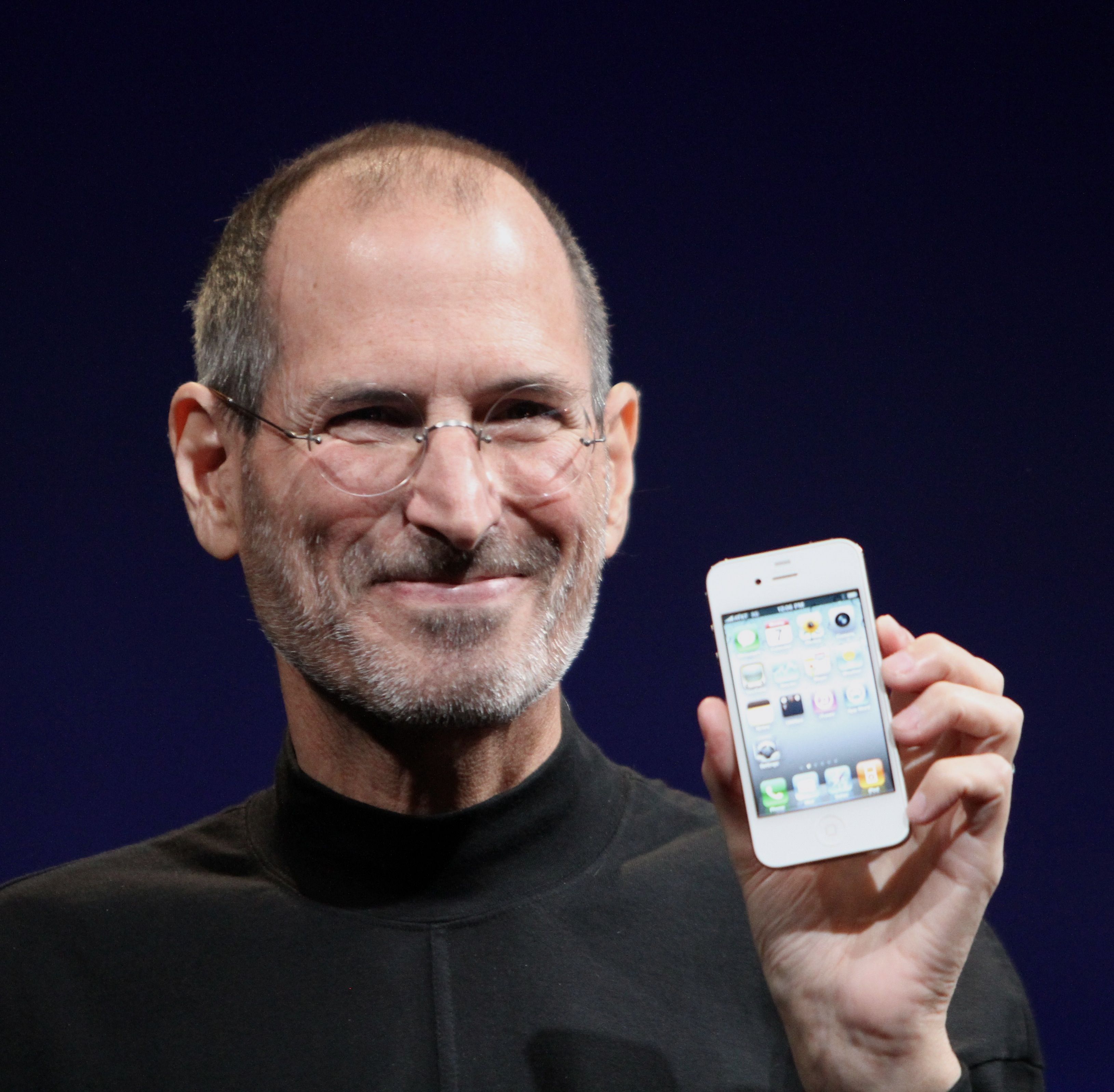

The extremely fast feedback that the iPhone touch screen provided to the user, which made the experience so responsive and intuitive, is still missing from current voice user interfaces.

There is a lot of positive momentum around voice interfaces. We’ve all seen the stats: the number of smart speakers in US households has risen steadily for the past five years. The share of voice searches of all search engine traffic has soared. Yet, the type of revolution that the touch screen gave birth to, after the launch of the iPhone, hasn’t really happened for voice — despite all the hype. Why is that?

Anyone who has a bit of experience with voice interfaces knows that you can do simple things like setting an alarm, switching on the lights, or playing your favorite music on Spotify pretty easily. However, if you try to do anything more sophisticated, say, order pizza for your eight best friends, reserve an intercontinental flight for a family of five, or buy a new party dress online, the chances are that you're going to fail miserably. For more demanding tasks, which covers most real-world tasks, the user experience with voice just isn’t there yet, at least not in the way it is for the touch screen.

Still, contrary to what people might think, the problem no longer really lies in speech recognition or natural language understanding accuracy. Even for fairly open-ended domains, both speech recognition and natural language understanding accuracy are pretty close to human parity.

The problem lies rather in the way these systems give feedback to the user. Typically, when a voice command is uttered, modern voice assistants wait until the user has stopped talking (using a technique called endpointing) before they start processing it. This works great for short commands like “Turn on the lights” or “Play X on Spotify”. However, for more complex tasks, this is a disaster.
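To make the endpointing idea concrete, here is a minimal sketch of a silence-timeout endpointer. It is a toy under stated assumptions, not how any particular assistant works: the per-frame energy values, the `endpoint` function, and the `silence_threshold` and `max_trailing_silence` parameters are all hypothetical names chosen for illustration.

```python
from typing import Iterable, List


def endpoint(
    frames: Iterable[float],
    silence_threshold: float = 0.1,   # hypothetical: energy below this counts as silence
    max_trailing_silence: int = 3,    # hypothetical: quiet frames needed to end an utterance
) -> List[List[float]]:
    """Split a stream of per-frame energy values into utterances.

    Nothing is released for processing until `max_trailing_silence`
    consecutive quiet frames have been seen, i.e. until the speaker
    appears to have stopped talking.
    """
    utterances: List[List[float]] = []
    current: List[float] = []
    quiet = 0
    for energy in frames:
        if energy >= silence_threshold:
            current.append(energy)    # speech frame: keep buffering
            quiet = 0
        elif current:
            quiet += 1                # silence after speech: count it
            if quiet >= max_trailing_silence:
                utterances.append(current)  # endpoint reached, release utterance
                current = []
                quiet = 0
    if current:
        utterances.append(current)    # flush whatever is left at end of stream
    return utterances


# A short pause (one quiet frame) keeps the utterance together ...
print(endpoint([0.5, 0.0, 0.6, 0.0, 0.0, 0.0]))
# ... but a three-frame thinking pause splits it in two.
print(endpoint([0.5, 0.6, 0.0, 0.0, 0.0, 0.7]))
```

Two things stand out in this sketch. The system does nothing useful while the user is talking, and a fixed silence timeout cannot tell a thinking pause from the end of a command, so a long utterance is easily cut short.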

Imagine if you need to express something that requires a longer explanation. When looking for a new t-shirt, a person might be tempted to say something like “I’m interested in t-shirts for men ...in color red, blue or orange, let’s say Boss ...no wait, I mean Hilfiger ...maybe size medium or large ...and something that’s on sale and can be shipped by tomorrow ...and I’d like to see the cheapest options first.”

When you utter something this long and winding to a traditional voice UI, something will most likely go wrong, resulting in the familiar “Sorry, I didn’t quite get that.” After you have just made the extended effort of explaining your intent to the system, this is an extremely frustrating experience. Or even worse, the endpointing might trigger a false positive halfway through the utterance, causing the voice assistant to resolve the intent prematurely and start a voice synthesis response that abruptly and irritatingly interrupts the speaker.

In the rest of this article, we will introduce the techniques of streaming spoken language understanding and reactive multi-modal voice user interfaces, which address the very problem that the current generation of voice UIs suffers from.

Unlike traditional voice systems that rely on endpointing to trigger a response, systems using streaming spoken language understanding actively try to comprehend the user's intent from the very moment the user starts to talk. The idea is that as soon as the user says something actionable, the UI can react to it instantly.

The benefit here is that if the system does not understand the user, the UI will instantly signal this back. This way the UI will fail fast but also recover quickly, as the user can immediately stop, correct, and continue. On the other hand, if the system does understand the user, this information is also conveyed immediately. This reassures users that their message is getting through and that they can continue speaking. As long as the system understands, the user can just go on and on, which means that longer and more complex utterances can be supported.
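As a rough illustration of this fail-fast, succeed-fast behavior, the sketch below consumes words one at a time and emits an event the moment a word is either understood or not. Everything in it is an assumption made for the example: the toy `VOCAB` and `FILLER` tables, the `stream_understand` function, and the event tuples stand in for a real streaming speech recognition and language understanding pipeline.

```python
from typing import Dict, Iterable, Iterator, Set, Tuple

# Hypothetical toy vocabulary: recognized word -> (slot, value).
VOCAB: Dict[str, Tuple[str, str]] = {
    "t-shirts": ("product", "t-shirt"),
    "red": ("color", "red"),
    "blue": ("color", "blue"),
    "medium": ("size", "M"),
    "large": ("size", "L"),
}

# Hypothetical filler words that carry no actionable meaning on their own.
FILLER: Set[str] = {"i'm", "interested", "in", "for", "or", "and", "size", "color", "maybe"}

Event = Tuple[str, str, str]


def stream_understand(words: Iterable[str]) -> Iterator[Event]:
    """Yield a UI event per actionable or unknown word, as the words arrive.

    Because this is a generator, each event is available to the UI before
    the rest of the utterance has been spoken.
    """
    for word in words:
        token = word.lower().strip(".,")
        if token in VOCAB:
            slot, value = VOCAB[token]
            yield ("understood", slot, value)   # reassure the user right away
        elif token not in FILLER:
            yield ("unclear", "word", token)    # fail fast so the user can correct


for event in stream_understand("I'm interested in t-shirts in red or blorp".split()):
    print(event)
```

The point of the generator is timing rather than accuracy: the UI learns about `t-shirt` and `red` while the user is still talking, and it can flag the unknown word immediately instead of rejecting the whole utterance at the end.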

Moreover, the immediate feedback from both the small failures and the small successes of the UI lets users correct either themselves or the UI on the fly, e.g., “I’m interested in Boss, no, I mean Tommy Hilfiger”. This opens the way for the UI to support not only more sophisticated and complex workflows but also a more stream-of-consciousness way of expressing the user's intent. This is more natural for humans and requires much less effort than the very specific phrasing that current voice UIs require.
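One simple way to picture such an on-the-fly correction is a tracker that retracts the most recent selection when it hears a correction cue. The sketch below is only an assumption about how this could be modeled: `BRANDS`, `CORRECTION_CUES`, and `track_brands` are hypothetical names, and a real system would detect corrections with a language understanding model rather than a keyword list.

```python
from typing import Dict, Iterable, List, Set

# Hypothetical keyword tables for the example utterance.
BRANDS: Dict[str, str] = {"boss": "Boss", "hilfiger": "Tommy Hilfiger"}
CORRECTION_CUES: Set[str] = {"no", "wait"}


def track_brands(words: Iterable[str]) -> List[List[str]]:
    """Return the selected brands after each word.

    Each snapshot is what a UI could show at that moment, so the display
    follows the speaker, including when they change their mind.
    """
    selected: List[str] = []
    snapshots: List[List[str]] = []
    for word in words:
        token = word.lower().strip(".,")
        if token in CORRECTION_CUES and selected:
            selected.pop()                      # retract the most recent brand
        elif token in BRANDS:
            selected.append(BRANDS[token])      # add the newly mentioned brand
        snapshots.append(list(selected))
    return snapshots


for snapshot in track_brands("Boss, no, I mean Tommy Hilfiger".split()):
    print(snapshot)
```

The selection goes from Boss, to empty, to Tommy Hilfiger without the user having to start the utterance over.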

The most prominent feedback modality of present-day voice interfaces is voice synthesis. As a feedback mechanism, however, it works poorly, because any ongoing user utterance will be abruptly interrupted, a problem commonly exhibited by contemporary voice UIs. Voice is also a fairly narrow-band channel for feedback. Instead, feedback should be given through a non-interruptive modality. Such modalities include haptic, non-linguistic auditory, and, perhaps most naturally and expressively, visual feedback. Using these modalities, the UI can react fast without interrupting the user. For instance, in the case of “I’m interested in t-shirts,” the UI would swiftly show the most popular t-shirt products, enabling the user to continue right away with a refining utterance, “Do you have Boss?” This further narrows down the displayed products to show only the Boss-branded t-shirts.
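The narrowing described above can be sketched as a set of filters that grows as the user speaks, with the visible product list recomputed after every change. The catalog, the `Product` class, and `visible_products` are made up for illustration and assume that an upstream component has already turned speech into slot values.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Product:
    name: str
    category: str
    brand: str


# Hypothetical catalog, for illustration only.
CATALOG: List[Product] = [
    Product("Classic Tee", "t-shirt", "Boss"),
    Product("Logo Tee", "t-shirt", "Tommy Hilfiger"),
    Product("Slim Jeans", "jeans", "Boss"),
]


def visible_products(filters: Dict[str, str]) -> List[str]:
    """Return the names of products matching every active filter."""
    return [
        product.name
        for product in CATALOG
        if all(getattr(product, field) == value for field, value in filters.items())
    ]


filters: Dict[str, str] = {}

filters["category"] = "t-shirt"     # "I'm interested in t-shirts"
print(visible_products(filters))    # the screen updates silently

filters["brand"] = "Boss"           # "Do you have Boss?"
print(visible_products(filters))    # the same view narrows further
```

Because the feedback is a screen update rather than synthesized speech, the user is never interrupted and can keep refining the request.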

This iteration loop is reminiscent of a setting familiar to everybody: human face-to-face communication, which is, in effect, a reactive, multimodal communication setup. In fact, it is a common misperception that human face-to-face conversation is primarily turn-based (or half-duplex) in the same way that chatbots or voice assistants are. That is, first I say something, then you say something, then I say something again, and so on. That is not the whole picture. Human face-to-face conversation is very much full-duplex: as one person talks, the listener gives feedback with nods, facial expressions, gestures, and interjections like aha and mhm. Furthermore, if the person listening doesn't understand what is being said, they are likely to start making more or less subtle facial expressions to signal their lack of comprehension. This tight full-duplex feedback loop is what makes human face-to-face communication so efficient. Reactive multi-modal voice user interfaces exhibit the same efficiency!

At the height of the voice assistant hype ushered in by Amazon Alexa, many probably heard the catchphrase “Voice is the UI!”. This article disagrees. Voice is a modality! Augmenting a UI with voice, in combination with the other available modalities, can result in an extremely efficient UI.

The perfect interface is one where you can use touch and voice seamlessly, choosing the best option for the context and sometimes using them interchangeably.

This efficiency comes from how the modalities work together, not from voice alone. Voice is, for instance, a very efficient method for inputting rich information. For scrolling, swiping, pointing, or selecting between a couple of valid alternatives, touch is probably better. For displaying complex multidimensional information, the visual display is unbeatable. Combining all of these modalities in a smart way is the killer app.

Let's circle back to where we started: the iPhone moment. What made the iPhone so powerful? Well, it was the very intuitive swipe and pinch gestures with which users could effortlessly control their phone. However, when the iPhone came out, the touch screen wasn't a new thing. There had been prior touch screen devices. They just sucked! You could swipe or press, and a second or two later something would happen.

What the iPhone brought to the mix was the extremely fast feedback that its touch screen could provide to the user, resulting in the very intuitive, fluid, and satisfying experience of controlling your phone. Voice UIs based on streaming spoken language understanding are a similar type of revolution. Streaming spoken language understanding offers extremely fast, fluid, and intuitive feedback to the user, akin to what the iPhone brought to controlling devices back in 2007. This time, however, voice is at the center, providing user experiences that we haven’t seen before and ushering in the iPhone moment for voice at last.

Speechly is a YC-backed company building tools for speech recognition and natural language understanding. Speechly offers flexible deployment options (cloud, on-premise, and on-device), highly accurate custom models for any domain, and privacy and scalability for hundreds of thousands of hours of audio.
